In this blog post, we are releasing Docmatix, a huge dataset for Document Visual Question Answering (DocVQA) that is more than 100 times larger than what was previously available. Ablations that use this dataset to fine-tune Florence-2 show a 20% increase in performance on DocVQA.

An example from the dataset

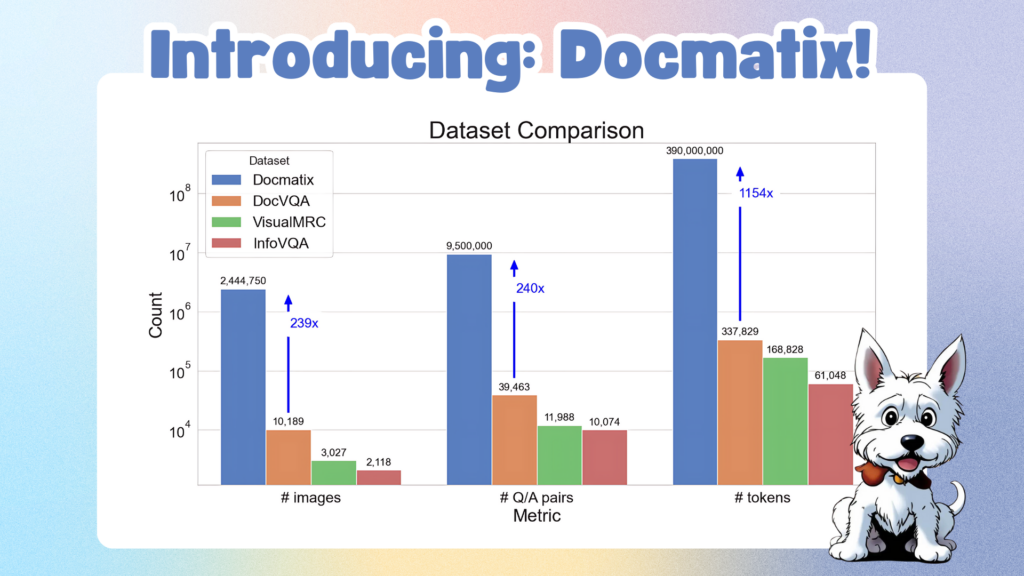

We first had the idea to create Docmatix when we developed The Cauldron, an extensive collection of 50 datasets for Vision-Language Models (VLMs), built in particular for Idefics2. Through this process, we identified a large gap in the availability of large-scale Document Visual Question Answering (DocVQA) datasets. The main dataset Idefics2 relied on was DocVQA, which contains 10,000 images and 39,000 question-answer (Q/A) pairs. Even with this and other datasets, open-source models still trail closed-source ones by a wide margin. To address this limitation, we are excited to introduce Docmatix, a DocVQA dataset with 9.5 million Q/A pairs derived from 2.4 million images and 1.3 million PDF documents. This is a 240-fold increase in scale over previous datasets.

Comparing Docmatix to other DocVQA datasets

Here you can explore the dataset yourself and see the types of documents and question-answer pairs that Docmatix contains.

Docmatix is generated from PDFA, an extensive OCR dataset containing 2.1 million PDFs. We took the transcriptions from PDFA and used a Phi-3-small model to generate Q/A pairs. To ensure the quality of the dataset, we filtered the generations and discarded the 15% of Q/A pairs identified as hallucinations. To do so, we used regular expressions to detect code and removed answers that contained the keyword “unanswered”. The dataset contains one row per PDF. We converted the PDFs to images at a resolution of 150 dpi and uploaded the processed images to the Hugging Face Hub for easy access. Every PDF in Docmatix can be traced back to the original PDFA dataset, which provides transparency and reliability. We still uploaded the processed images for convenience, because converting many PDFs to images is resource-intensive.
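The filtering step can be illustrated with a minimal sketch. The post only states that regular expressions were used to detect code and that answers containing the keyword were removed, so the specific patterns and the names `CODE_PATTERN`, `keep_pair` and `filter_pairs` below are hypothetical, not the actual Docmatix filters.

```python
import re

# Hypothetical heuristics: these patterns are illustrative guesses at what
# "code" might look like in a generated answer, not the real Docmatix rules.
CODE_PATTERN = re.compile(
    r"```"                    # fenced code block
    r"|\bdef\s+\w+\s*\("      # Python function definition
    r"|#include\s*<"          # C/C++ include directive
    r"|</?\w+[^>]*>"          # HTML/XML tag
)

# Keyword taken from the text above; matched case-insensitively.
REFUSAL_KEYWORD = "unanswered"


def keep_pair(question: str, answer: str) -> bool:
    """Return True if a generated Q/A pair passes both filters."""
    if REFUSAL_KEYWORD in answer.lower():
        return False
    if CODE_PATTERN.search(question) or CODE_PATTERN.search(answer):
        return False
    return True


def filter_pairs(pairs):
    """Keep only the (question, answer) tuples that pass keep_pair."""
    return [(q, a) for q, a in pairs if keep_pair(q, a)]
```

Calling `filter_pairs` on a list of `(question, answer)` tuples returns the pairs that survive. Simple pattern checks like these are cheap enough to run over millions of generations, at the cost of some false positives.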

Processing pipeline used to generate Docmatix

After processing the first small batch of the dataset, we ran several ablation studies to optimize the prompts. We aimed to generate around four Q/A pairs per page: too many pairs lead to a large overlap between them, while too few leave out details. We also wanted the answers to be human-like, avoiding excessively short or long responses, and we prioritized diversity in the questions to keep repetition to a minimum. Interestingly, when we guided the Phi-3 model to ask questions based on specific information in the document (e.g., “What is John Doe’s title?”), the questions showed very little repetition. The following plot shows some key statistics from our analysis.
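Statistics of this kind (pairs per page, question repetition, answer length) could be computed along the following lines. This is a sketch under assumptions: the function names, the normalization rule and the exact definition of the repetition rate are illustrative choices, not the analysis code behind the plot.

```python
from collections import Counter
import re


def normalize(question: str) -> str:
    """Lowercase and strip punctuation so near-identical questions compare equal."""
    return re.sub(r"[^a-z0-9 ]+", "", question.lower()).strip()


def prompt_stats(pages):
    """Summarize the output of one prompt.

    `pages` is a non-empty list of pages, each a non-empty list of
    (question, answer) tuples generated for that page.
    """
    questions = [normalize(q) for page in pages for q, _ in page]
    answers = [a for page in pages for _, a in page]
    counts = Counter(questions)
    # Count every extra copy of a question beyond its first occurrence.
    repeated = sum(c - 1 for c in counts.values())
    return {
        "pairs_per_page": len(questions) / len(pages),
        "repetition_rate": repeated / len(questions),
        "avg_answer_words": sum(len(a.split()) for a in answers) / len(answers),
    }
```

Comparing these numbers across prompt variants gives a quick way to see which prompt comes closest to the target of about four diverse pairs per page.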

Analysis of Docmatix per prompt

To evaluate Docmatix, we conducted ablation studies using the Florence-2 model. For comparison, we trained two versions of the model. The first version was trained for several epochs on the DocVQA dataset. The second version was trained for one epoch on Docmatix (20% of the images and 4% of the Q/A pairs), followed by one epoch on DocVQA to ensure that the model produced the correct format for DocVQA evaluation. The results are significant: training on this small portion of Docmatix yielded a relative improvement of almost 20%. Furthermore, the 0.7B Florence-2 model performed only about 5% worse than the 8B Idefics2 model trained on a mixture of datasets.
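The relative figures can be checked with a few lines of arithmetic. The scores are the ones reported in the results table; `relative_change` is a hypothetical helper name.

```python
def relative_change(baseline: float, new: float) -> float:
    """Relative change of `new` with respect to `baseline`, in percent."""
    return (new - baseline) / baseline * 100.0


# Scores as reported in the results table.
florence_docvqa = 60.1    # Florence-2 trained on DocVQA only
florence_docmatix = 71.4  # Florence-2 trained on Docmatix, then DocVQA
idefics2 = 74.0           # Idefics2, 8B parameters

gain = relative_change(florence_docvqa, florence_docmatix)
gap = relative_change(idefics2, florence_docmatix)
print(f"gain over the DocVQA-only baseline: {gain:.1f}%")  # 18.8%
print(f"gap to Idefics2: {gap:.1f}%")                      # -3.5%
```

The gain of about 18.8% corresponds to the "almost 20%" quoted above, and the gap to Idefics2 comes out at roughly 3.5% relative (2.6 points absolute), which is within the 5% stated.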

| Model | ANLS on DocVQA | Model size |
|---|---|---|
| Florence-2 fine-tuned on DocVQA | 60.1 | 700M |
| Florence-2 fine-tuned on Docmatix | 71.4 | 700M |
| Idefics2 | 74.0 | 8B |
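The scores above use the standard DocVQA metric, Average Normalized Levenshtein Similarity (ANLS), shown in the table on a 0 to 100 scale. For readers unfamiliar with it, here is a minimal sketch of the usual definition with a threshold of 0.5. It is an illustration written for this post, not the official evaluation script, and the lowercasing and whitespace stripping are assumptions about the preprocessing.

```python
def levenshtein(a: str, b: str) -> int:
    """Edit distance between two strings (insert, delete, substitute)."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution or match
            ))
        previous = current
    return previous[-1]


def anls(predictions, references, threshold: float = 0.5) -> float:
    """Average Normalized Levenshtein Similarity, in the range [0, 1].

    `predictions` is a non-empty list of strings; `references` is a list of
    lists of accepted answers, one list per prediction.
    """
    total = 0.0
    for prediction, accepted in zip(predictions, references):
        pred = prediction.strip().lower()
        best = 0.0
        for answer in accepted:
            gold = answer.strip().lower()
            longest = max(len(pred), len(gold))
            distance = levenshtein(pred, gold) / longest if longest else 0.0
            # Answers that are too far from the reference score zero.
            similarity = 1.0 - distance if distance < threshold else 0.0
            best = max(best, similarity)
        total += best
    return total / len(predictions)
```

Because the metric tolerates small edit distances, a prediction with a minor OCR-style typo still earns partial credit, while a clearly wrong answer earns none.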

Conclusion

In this post, we presented Docmatix, a huge dataset for DocVQA. We showed that fine-tuning Florence-2 on Docmatix can increase DocVQA performance by 20%. This dataset should help bridge the gap between proprietary and open-source VLMs. We encourage the open-source community to leverage Docmatix and train new, amazing DocVQA models! We can’t wait to see your models on the Hub!

Useful resources

Thanks to Merve and Leo for reviews and thumbnails for this blog.