Austin, Texas – The next time you vote, you may make a decision based on the political ads you see. But with advances in artificial intelligence, how can we ensure that everything in our ads is legal?

Experts have warned that AI is posing major challenges for elections, with manipulated media using altered images to sway voters.

Handling misinformation generated by AI

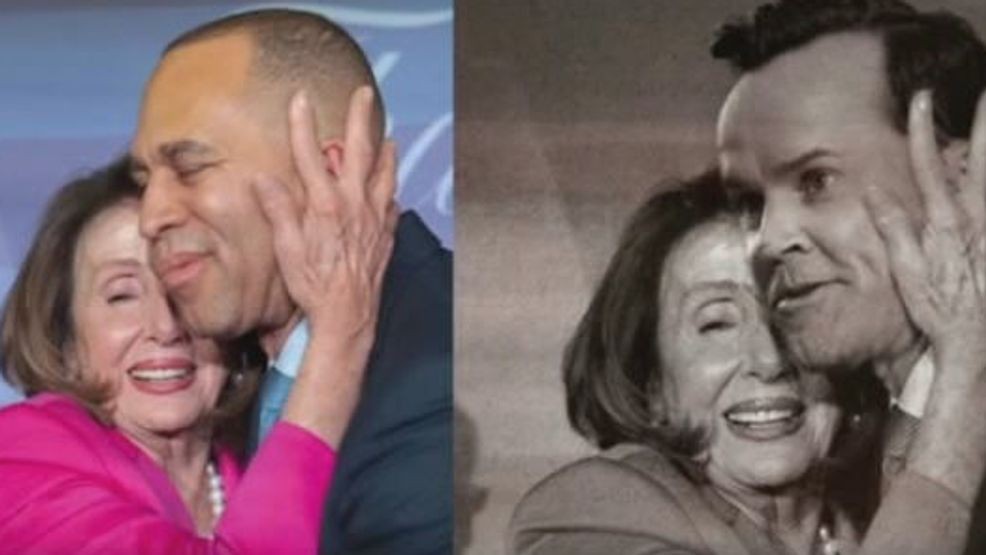

Texas leaders are increasingly concerned about the impact of AI on elections at all levels. About a year after a manipulated image showed former Texas House Speaker Dade Phelan and Nancy Pelosi hugging, he is now proposing a law cracking down on AI in political ads.

House Bill 366 would require political ads featuring altered images, videos, or audio recordings to disclose that the content “did not occur in reality.”

“These technologies are weaponized to mislead voters, distort reality and misrepresent candidates,” Phelan told the committee hearing the bill.

The law aims to keep pace with AI

The bill does not prohibit AI-generated content in campaigns, but it requires transparency through a clear disclaimer.

The Texas Ethics Commission would determine the wording and appearance of the disclaimer.

Phelan emphasized that the law does not target casual social media content.

“I’m not here because of your meme,” he said at the committee meeting. “This has nothing to do with X, Facebook, or anything on social media.”

Jon Taylor, chair of the political science department at UTSA, says he’s not surprised that lawmakers want to tackle the issue.

“Technology is constantly outpacing the ability of the law to keep up with it. This is one example of that. AI is advancing exponentially faster than the ability of the law and politicians, elected officials and policymakers to handle it,” Taylor said.

Broad support for AI disclosure

Political lawyer Andrew Cates, who testified on the issue, noted that many states have already adopted similar measures.

“People know because they literally slap the disclaimer there to say that AI was used, and that’s what a lot of states are already doing,” Cates said.

Currently, around 19 states have AI disclosure laws for political advertising, and Cates said about 12 more are considering similar measures.

Texas was actually the first state to criminalize some deepfakes in 2019.

Under that law, you could face a year in county jail and a fine of up to $4,000, but only if the deepfake is a video and is published within 30 days of an election.

The latest AI proposal for elections, HB 366, would make failing to disclose AI elements in political ads a Class A misdemeanor, but some supporters argue the penalty should be raised to a felony.

“There are probably more protective changes they’ll make in the future,” Cates said. “AI is just getting better, more realistic, more confusing. I think we’re beginning to think about what’s coming in the campaign cycle, especially after such a controversial speaker election.”

AI regulations that go beyond political ads

Texas legislators are also working on other AI-related issues this session. One of their top priorities is addressing explicit AI-generated content.

The Texas Senate has already unanimously passed a bill expanding the definition of “child pornography” to include AI-generated content, reflecting broader concerns about how artificial intelligence can be used to exploit or mislead the public.