Cadence Design Systems announced two AI-related collaborations at this week’s CadenceLIVE event, expanding its work with Nvidia and introducing a new integration with Google Cloud. The Nvidia partnership focuses on combining AI with physics-based simulation and accelerated computing for robotic systems and system-level design.

The companies said the approach targets modeling and deployment in semiconductors and large-scale AI infrastructure, and extends to robotic systems, which Nvidia calls physical AI.

Cadence is integrating its multiphysics simulation and system design tools with Nvidia’s CUDA-X libraries, AI models, and Omniverse-based simulation environments. The tools model thermal and mechanical interactions, allowing engineers to evaluate how a system will behave under real-world operating conditions. They also go beyond chip design to cover infrastructure components such as networks and power systems, so engineers can simulate system behavior on one integrated platform before physical deployment. The companies said system performance is determined by how the computing, networking, and power systems work together.

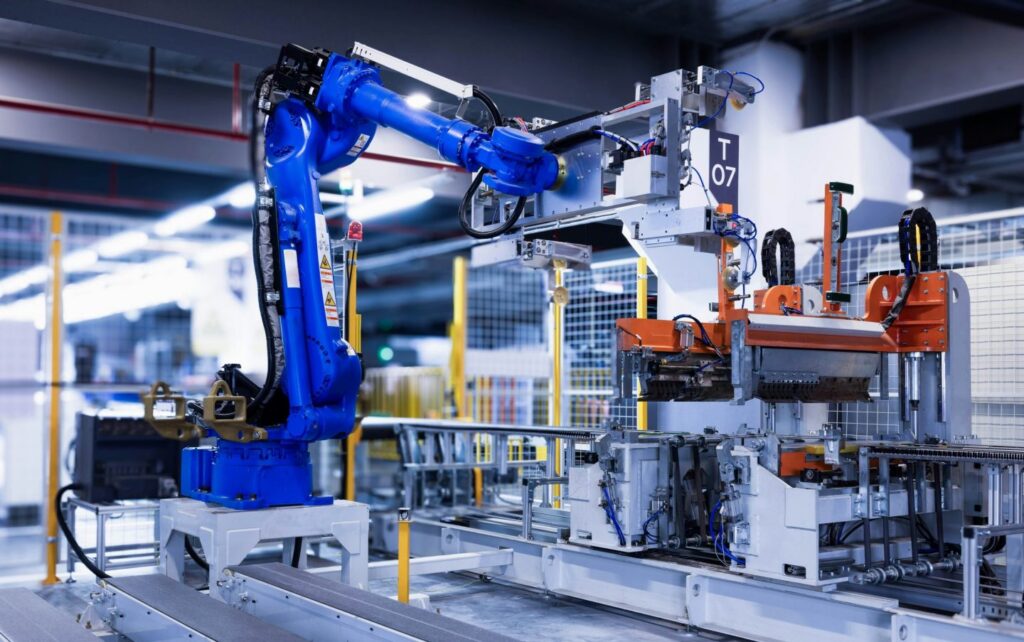

The partnership also includes robot development. Cadence’s physics engine, which models how real-world materials interact, is linked with Nvidia’s AI models used to train AI-powered robotic systems in simulated environments.

“We’re working with you on the Robotics Systems Board of Directors,” Nvidia CEO Jensen Huang said during the event.

Training robots in simulation reduces the need for real-world data collection. The companies said these datasets are not collected from physical systems and must be generated using physically-based models. The datasets generated by the simulation are used to train the model, and the results depend on the accuracy of the underlying physical model.

“The more accurate [the training data generated] the better the model will be,” said Cadence CEO Anirudh Devgan.

Nvidia says industrial robotics companies are using its Isaac simulation framework and Omniverse-based digital twin tools to test robotic systems before deployment. Companies such as ABB Robotics, FANUC, YASKAWA, and KUKA are integrating these simulation tools into their virtual commissioning workflows to test production systems in software before physical deployment.

Nvidia said these systems are used to model complex robot movements and entire production lines using physically accurate digital environments.

Chip design automation on the cloud

Separately, Cadence has introduced a new AI agent designed to automate later-stage chip design tasks. The agent focuses on the physical layout process, which translates a circuit design into a silicon implementation. The release builds on an agent introduced earlier this year for front-end chip design, where circuits are defined in code-like descriptions.

This system will be available through Google Cloud. Cadence says the integration combines its electronic design automation tools with Google’s Gemini model to automate design and verification workflows. Cloud deployments allow teams to run these workloads without relying on on-premises computing infrastructure.

Cadence’s ChipStack AI Super Agent platform uses native design tools and model-based reasoning to coordinate tasks across multiple design stages. The system can interpret design requirements and automatically perform tasks at various stages of the design process.

Cadence has reported up to 10x productivity gains for initial implementations of design and verification tasks. The company did not disclose specific customer implementations.

“We help build AI systems, and those AI systems can help improve the design process,” Devgan said.

The companies said simulation tools are being used to validate the system in a virtual environment before physical deployment. Digital twin models allow engineers to test design tradeoffs, evaluate performance scenarios, and optimize software configurations.

They added that the cost and complexity of large data center infrastructure limits the use of trial-and-error deployment methods.

Quantum AI models introduced

In a separate announcement, Nvidia introduced a family of open-source quantum AI models called NVIDIA Ising. The family is named after the Ising model, a mathematical framework used to represent interactions within physical systems.

The models are designed to support quantum processor calibration and quantum error correction. Nvidia says they deliver up to 2.5x faster performance and 3x higher accuracy in the decoding process used for error correction.
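For readers unfamiliar with the Ising model itself, the short sketch below computes the energy of a one-dimensional chain of spins, where each spin is +1 or -1 and neighboring spins interact. It illustrates only the textbook framework the name refers to and says nothing about how Nvidia’s models work; the function name `ising_energy` and its parameters are assumptions made for this example.

```python
from typing import Sequence


def ising_energy(spins: Sequence[int], coupling: float = 1.0, field: float = 0.0) -> float:
    """Energy of a 1D open-chain Ising configuration (textbook form).

    E = -coupling * sum(s_i * s_{i+1}) - field * sum(s_i),
    where every spin s_i is either -1 or +1.
    """
    if any(s not in (-1, 1) for s in spins):
        raise ValueError("each spin must be -1 or +1")
    # Interaction term: each pair of neighboring spins contributes s_i * s_{i+1}.
    pair_term = sum(a * b for a, b in zip(spins, spins[1:]))
    # External-field term: each spin contributes individually.
    field_term = sum(spins)
    return -coupling * pair_term - field * field_term


# With positive coupling, aligned neighbors lower the energy
# and anti-aligned neighbors raise it.
aligned = ising_energy([1, 1, 1, 1])
alternating = ising_energy([1, -1, 1, -1])
print(aligned, alternating)  # -3.0 3.0
```

The two calls show the basic behavior: four aligned spins give the lowest energy for this chain, while an alternating pattern gives the highest.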

“AI is essential to making quantum computing practical,” Huang said. “With Ising, AI becomes the control plane, or operating system, of quantum machines, transforming fragile qubits into scalable and reliable quantum GPU systems.”

(Photo provided by Homa Appliance)

AI News is brought to you by TechForge Media.