Over the past decade, we’ve laid much of the foundation for the modern AI era, from pioneering the Transformer architecture on which all large language models are based, to developing agent systems that can learn and plan, such as AlphaGo and AlphaZero.

We have applied these technologies to make breakthroughs in quantum computing, mathematics, life sciences, and algorithm discovery. And we continue to double down on the breadth and depth of our basic research, working to invent the next big breakthroughs needed for artificial general intelligence (AGI).

That’s why we’re working to extend Gemini 2.5 Pro, our premier multimodal foundation model, to become a “world model” that, like your brain, can understand aspects of the world, simulate them to plan, and imagine new experiences.

We’ve been making progress in this direction for some time, from our pioneering work training agents to master complex games like Go and StarCraft, to building Genie 2, which can generate interactive 3D simulated environments from a single image prompt.

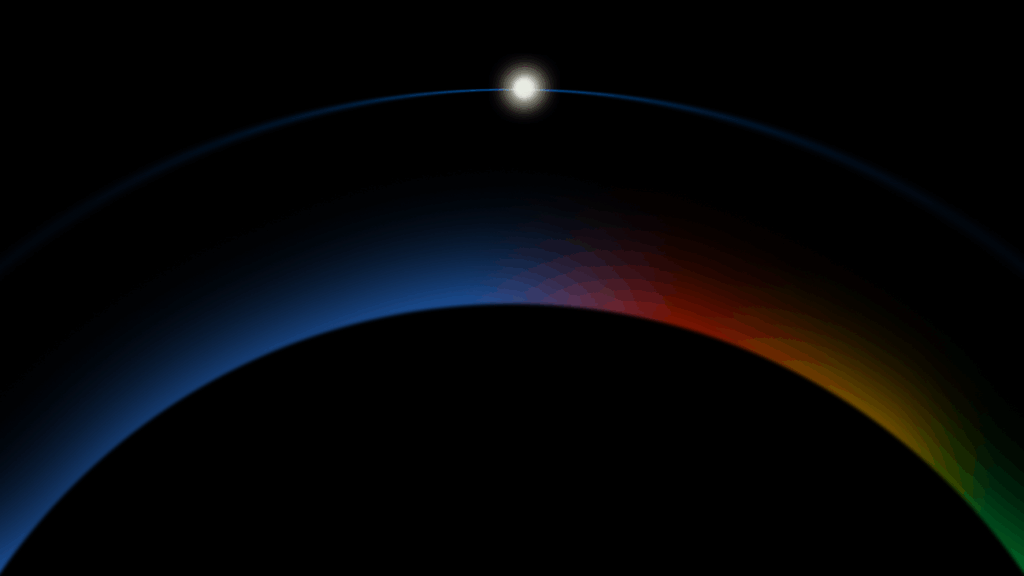

Already, evidence of these capabilities is visible in Gemini’s ability to use world knowledge and reasoning to represent and simulate natural environments, Veo’s deep understanding of intuitive physics, and the way Gemini Robotics teaches robots how to grasp, follow instructions, and adjust on the fly.

Making Gemini a world model is an important step in developing a new kind of AI that is more general and useful: a universal AI assistant. This is an intelligent AI that understands your situation and can plan and take action on your behalf on any device.