We are excited to announce that the NVIDIA NeMo Retriever team has developed a new agentic retrieval pipeline that has secured the #1 spot on the ViDoRe v3 pipeline leaderboard. The same pipeline architecture also earned the #2 spot on the demanding, reasoning-intensive BRIGHT leaderboard.

In the rapidly evolving landscape of AI search, many solutions are highly specialized and designed to perform very well at specific narrow tasks. However, real-world enterprise applications rarely have the luxury of fully curated, single-domain data. You need a system that can seamlessly adapt to a variety of challenges, from parsing complex visual layouts to performing deep logical reasoning.

That’s why we prioritized versatility in our design. We built an agentic pipeline that dynamically adapts its search and reasoning strategies to the data at hand, rather than relying on dataset-specific heuristics. This allows us to deliver state-of-the-art performance across very different benchmarks without changing the underlying architecture.

Here’s a look at how we built it.

Motivation: Why semantic similarity alone is not enough

For many years, dense retrieval based on semantic similarity has been the standard for information retrieval. However, as the uses of retrieval expand, finding relevant documents requires more than semantic similarity. Complex document search calls for reasoning skills, an understanding of real-world systems, and iterative exploration.

There is a fundamental gap. LLMs are great at thinking and reasoning, but they can’t process millions of documents at once. Conversely, retrievers can easily sift through millions of documents, but their reasoning skills are limited. Agentic retrieval bridges this gap by creating an active, iterative loop between the LLM and the retriever.

How it works: The agentic loop

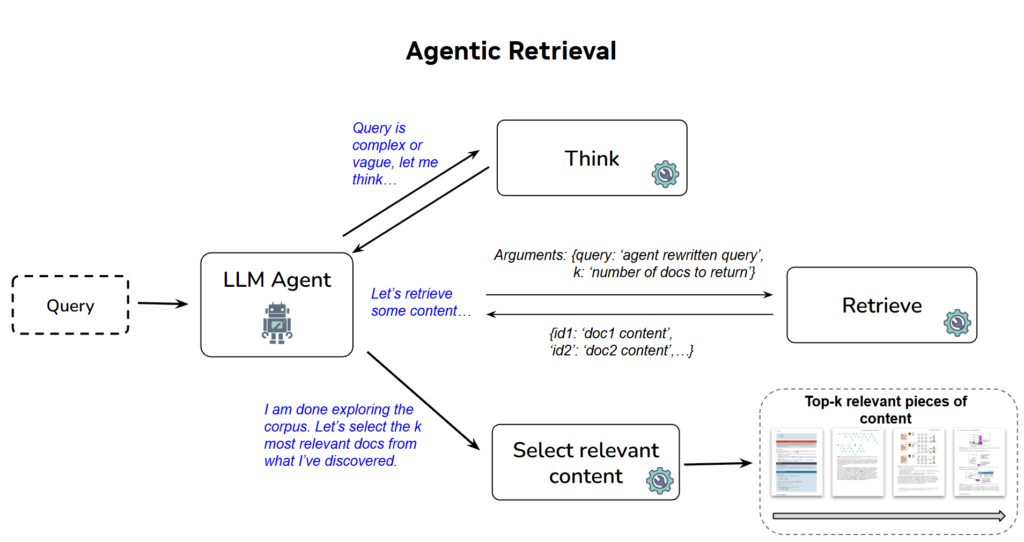

Agentic retrieval pipeline overview

Our agentic retrieval pipeline relies on the ReAct architecture. Instead of issuing a single one-shot query, the agent iteratively searches, evaluates the results, and refines its approach.

The agent uses built-in tools: think to plan its approach, and final_results to output the exact documents needed for a particular query. It also uses a retrieve(query, top_k) tool to explore the corpus. Through this loop, we found that successful search patterns emerge naturally:

- Generating better queries: The agent dynamically adjusts its search queries based on newly discovered information.
- Persistent rephrasing: The agent keeps rephrasing the query until useful information is found.
- Breaking down complexity: The agent turns complex multi-part queries into multiple simpler queries with clear goals.
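To make the loop concrete, here is a minimal sketch of a ReAct-style think / retrieve / final_results cycle. It is not the NeMo Retriever implementation: `run_agent`, `scripted_policy`, and `toy_retrieve` are hypothetical names, the LLM is replaced by a scripted policy so the example runs offline, and the retriever is a toy word-overlap ranker.

```python
from typing import Callable, List, Tuple

# Hypothetical types for this sketch (not the NeMo Retriever API).
Action = Tuple[str, dict]                     # (tool name, tool arguments)
Policy = Callable[[List[dict]], Action]       # stands in for the LLM
Retriever = Callable[[str, int], List[str]]   # retrieve(query, top_k) -> doc ids


def run_agent(question: str, policy: Policy, retrieve: Retriever,
              max_steps: int = 8) -> Tuple[List[str], List[List[str]]]:
    """Run the loop; return (final ranking, every ranking retrieved on the way)."""
    history: List[dict] = [{"role": "user", "content": question}]
    rankings: List[List[str]] = []
    for _ in range(max_steps):
        tool, args = policy(history)
        if tool == "think":
            observation = "ok"  # planning only, nothing to execute
        elif tool == "retrieve":
            observation = retrieve(args["query"], args.get("top_k", 5))
            rankings.append(observation)
        elif tool == "final_results":
            return list(args["doc_ids"]), rankings
        else:
            observation = f"unknown tool: {tool}"
        history.append({"role": "tool", "tool": tool, "args": args,
                        "content": observation})
    # Step budget exhausted: the caller can fall back on `rankings`.
    return [], rankings


def scripted_policy(history: List[dict]) -> Action:
    """Toy stand-in for the LLM: replays a fixed plan, one action per turn."""
    script = [
        ("think", {"thought": "split the question into two simpler queries"}),
        ("retrieve", {"query": "gpu memory limits", "top_k": 2}),
        ("retrieve", {"query": "batch size tuning", "top_k": 2}),
        ("final_results", {"doc_ids": ["d2", "d1"]}),
    ]
    return script[len(history) - 1]


def toy_retrieve(query: str, top_k: int) -> List[str]:
    """Toy retriever: rank documents by word overlap with the query."""
    corpus = {
        "d1": "gpu memory limits explained",
        "d2": "tuning batch size for gpu memory",
        "d3": "cooking pasta",
    }
    words = set(query.split())
    ranked = sorted(corpus, key=lambda d: (-len(words & set(corpus[d].split())), d))
    return ranked[:top_k]


final, rankings = run_agent("How do I avoid running out of GPU memory?",
                            scripted_policy, toy_retrieve)
print(final)     # ranking chosen by the agent
print(rankings)  # one ranked list per retrieve call
```

In a real pipeline the policy would be an LLM call that receives the tool schema and the history. Keeping every intermediate ranking is what lets a fallback reuse the trajectory if the agent never reaches final_results.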

Finally, to synthesize its iterative exploration, the agent calls the final_results tool to output the most relevant documents, ranked by their relevance to the given query. As a safety net, if the agent hits the maximum number of steps or the context length limit, the pipeline falls back to Reciprocal Rank Fusion (RRF). RRF scores each document based on its rank across all retrieval attempts in the agent’s trajectory.
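The RRF fallback can be sketched in a few lines. This is an illustration under assumptions rather than the pipeline's actual code: the function name is hypothetical, and the smoothing constant `k = 60` is the value commonly used for RRF, which the post does not specify.

```python
from collections import defaultdict
from typing import Dict, List


def reciprocal_rank_fusion(rankings: List[List[str]], k: int = 60) -> List[str]:
    """Fuse several ranked lists: each document earns 1 / (k + rank) per list."""
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties are broken by document id for determinism.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Three retrieval attempts from one hypothetical agent trajectory.
trajectory = [["a", "b", "c"], ["b", "c", "d"], ["b", "a"]]
fused = reciprocal_rank_fusion(trajectory)
print(fused)  # ['b', 'a', 'c', 'd']
```

Document `b` wins because it appears near the top of all three lists, which is the behavior wanted from a fallback: documents the agent kept rediscovering rank highest.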

Engineering for speed and scale

Agentic workflows are notoriously slow and resource-intensive. To run this pipeline for leaderboard-scale evaluations, we had to rethink how the LLM agent and the retriever communicate.

Initially, the retriever was exposed to the agent through a Model Context Protocol (MCP) server. MCP was a natural choice, as it is designed precisely to give LLMs access to external tools. In practice, however, this architecture placed a compounding tax on experimentation speed. Each run required starting a separate MCP server, loading the appropriate dataset corpus into GPU memory, and coordinating both the client and server lifecycles. Each of these steps was an opportunity for a silent misconfiguration or for the server to freeze under a large number of requests. Network round-trips added latency to every retrieval call, and the cognitive overhead of managing a two-process setup made it significantly harder for other teams to adopt and iterate on the pipeline.

To solve this, we replaced the MCP server with a thread-safe singleton retriever that lives inside the agent's process. The singleton loads the model and corpus embeddings once, guards all access with a reentrant lock, and exposes the same retrieve() interface to any number of concurrent agent tasks. This provides the main benefit of the MCP server (safe, shared access to a GPU-resident retriever from multiple threads) without the overhead of network serialization or the need for a separate server process. This single architectural change eliminated an entire class of deployment errors and dramatically increased both GPU utilization and experiment throughput.
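The pattern can be sketched as follows. This is a minimal illustration, not the NeMo Retriever code: the class name `SingletonRetriever`, the `get()` accessor, and the toy word-overlap scoring are assumptions, and a small dictionary stands in for loading a model and corpus embeddings onto the GPU.

```python
import threading
from concurrent.futures import ThreadPoolExecutor
from typing import List, Optional


class SingletonRetriever:
    """In-process retriever shared by all agent threads (hypothetical sketch)."""

    _instance: Optional["SingletonRetriever"] = None
    _lock = threading.RLock()  # reentrant: the owning thread may re-acquire it
    load_count = 0             # counts how often the expensive load ran

    def __init__(self) -> None:
        # Stand-in for loading the embedding model and corpus embeddings.
        SingletonRetriever.load_count += 1
        self._corpus = {
            "d1": "gpu memory limits",
            "d2": "batch size tuning",
            "d3": "thread safety in python",
        }

    @classmethod
    def get(cls) -> "SingletonRetriever":
        """Return the shared instance, creating it at most once."""
        with cls._lock:
            if cls._instance is None:
                cls._instance = cls()
            return cls._instance

    def retrieve(self, query: str, top_k: int = 5) -> List[str]:
        """Rank documents by word overlap; the lock serializes access."""
        with self._lock:
            words = set(query.split())
            ranked = sorted(
                self._corpus,
                key=lambda d: (-len(words & set(self._corpus[d].split())), d),
            )
            return ranked[:top_k]


def worker(query: str) -> List[str]:
    # Every agent task goes through the same instance; no server, no network hop.
    return SingletonRetriever.get().retrieve(query, top_k=1)


with ThreadPoolExecutor(max_workers=8) as pool:
    results = list(pool.map(worker, ["gpu memory"] * 16))

print(SingletonRetriever.load_count)  # the corpus was loaded once
print(results[0])                     # ['d1']
```

The lock in `get()` prevents two threads from both loading the corpus on first use, and the same lock in `retrieve()` keeps concurrent calls from interleaving on shared state. A reentrant lock means a method that already holds it can call another locked method without deadlocking.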

Generalization and specialization across benchmarks

A common finding in modern search evaluation is that solutions that are highly optimized for a specific type of task often result in performance gaps when applied to a completely different domain.

| Pipeline | ViDoRe v3 (NDCG@10) |
| --- | --- |
| NeMo Agentic Retrieval (Opus 4.5 + nemotron-colembed-vl-8b-v2) | 69.22 (#1) |
| Dense Retrieval (nemotron-colembed-vl-8b-v2) | 64.36 |
| INF-X-Retriever (INF-Query-Aligner + nemotron-colembed-vl-8b-v2) | 62.31 |
| INF-X-Retriever | 51.01 |

| Pipeline | BRIGHT (NDCG@10) |
| --- | --- |
| INF-X-Retriever | 63.40 (#1) |
| NeMo Agentic Retrieval (Opus 4.5 + nemotron-reasoning-3b) | 50.90 (#2) |

We placed 2nd on the reasoning-intensive BRIGHT leaderboard with an NDCG@10 of 50.90. INF-X-Retriever, the number one solution on that leaderboard, achieved an impressive 63.40. However, to test its cross-domain adaptability, we benchmarked the INF-X pipeline (combined with the same nemotron-colembed-vl-8b-v2 embedding model used in our agentic pipeline) on ViDoRe v3, a dataset focused on visually rich and diverse enterprise documents. On this different task, it reached an NDCG@10 of 62.31, lower than the dense retrieval baseline of 64.36. In other words, INF-Query-Aligner does not improve over the dense retrieval baseline on ViDoRe v3.

In contrast, the same agentic pipeline architecture (here combining Opus 4.5 with nemotron-colembed-vl-8b-v2) took first place on ViDoRe v3 with a score of 69.22.

This highlights a core strength of our approach: generalizability. Rather than relying on dataset-specific heuristics or query rewriters and aligners, the agentic loop naturally adapts its strategy to the dataset at hand, whether that requires multi-step logical reasoning or parsing complex visual layouts.

Ablation study: open vs. closed models

ViDoRe v3

| Agent | Embedding Model | NDCG@10 | Average Seconds/Query | Total Input Tokens (M) | Total Output Tokens (M) | Average Retrieval Calls |
| --- | --- | --- | --- | --- | --- | --- |
| Opus 4.5 | nemotron-colembed-vl-8b-v2 | 69.22 | 136.3 | 1837 | 15 | 9.2 |
| gpt-oss-120b | nemotron-colembed-vl-8b-v2 | 66.38 | 78.6 | 1860 | 13 | 2.4 |
| gpt-oss-120b | llama-nemotron-embed-vl-1b-v2 | 62.42 | 78.1 | 1459 | 13 | 2.5 |
| – | nemotron-colembed-vl-8b-v2 | 64.36 | 0.67 | – | – | – |
| – | llama-nemotron-embed-vl-1b-v2 | 55.83 | 0.02 | – | – | – |

BRIGHT

| Agent | Embedding Model | NDCG@10 | Average Seconds/Query | Total Input Tokens (M) | Total Output Tokens (M) | Average Retrieval Calls |
| --- | --- | --- | --- | --- | --- | --- |
| Opus 4.5 | llama-embed-nemotron-reasoning-3b | 50.79 | 148.2 | 1251 | 11 | 11.8 |
| gpt-oss-120b | llama-embed-nemotron-reasoning-3b | 41.27 | 92.8 | 1546 | 11 | 4.5 |
| gpt-oss-120b | llama-nemotron-embed-vl-1b-v2 | 33.85 | 139.1 | 1516 | 12 | 6.6 |
| – | llama-embed-nemotron-reasoning-3b | 38.28 | 0.11 | – | – | – |
| – | llama-nemotron-embed-vl-1b-v2 | 19.56 | 0.08 | – | – | – |

We ran extensive ablations to understand the trade-offs between frontier closed models and open-weight alternatives.

- Model selection: On ViDoRe v3, replacing Opus 4.5 with the open gpt-oss-120b resulted in a small drop in accuracy (69.22 to 66.38 NDCG@10) and far fewer retrieval calls (9.2 to 2.4 per query on average). On BRIGHT, the gap is even larger (50.79 vs. 41.27), showing that deeper reasoning tasks still benefit greatly from frontier models like Opus 4.5.
- Embeddings: We obtained the best results when pairing the agent with specialized embedding models (nemotron-colembed-vl-8b-v2 for ViDoRe and llama-embed-nemotron-reasoning-3b for BRIGHT), showing that a strong baseline retriever gives the agent a higher ceiling. It is also interesting that the agent narrows the gap between stronger and weaker embedding models. On ViDoRe, the dense retrieval gap between the stronger nemotron-colembed-vl-8b-v2 and the weaker llama-nemotron-embed-vl-1b-v2 is about 8.5 points, but when both are paired with the gpt-oss-120b agent, the gap shrinks to about 4. Similarly, on BRIGHT, llama-embed-nemotron-reasoning-3b outperforms llama-nemotron-embed-vl-1b-v2 by about 19 points in dense retrieval, but when both are paired with the gpt-oss-120b agent the lead shrinks to about 7.5.
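The gap-narrowing claim can be checked directly against the ablation tables. The snippet below is only a worked arithmetic example: the scores are copied from the tables above, while the dictionary layout and the `embedding_gap` helper are illustrative names.

```python
# NDCG@10 from the ablation tables; "agent" rows use gpt-oss-120b.
ndcg = {
    "ViDoRe v3": {
        "strong": {"dense": 64.36, "agent": 66.38},  # nemotron-colembed-vl-8b-v2
        "weak": {"dense": 55.83, "agent": 62.42},    # llama-nemotron-embed-vl-1b-v2
    },
    "BRIGHT": {
        "strong": {"dense": 38.28, "agent": 41.27},  # llama-embed-nemotron-reasoning-3b
        "weak": {"dense": 19.56, "agent": 33.85},    # llama-nemotron-embed-vl-1b-v2
    },
}


def embedding_gap(benchmark: str, mode: str) -> float:
    """Strong-minus-weak embedding score for dense or agentic retrieval."""
    scores = ndcg[benchmark]
    return round(scores["strong"][mode] - scores["weak"][mode], 2)


for benchmark in ndcg:
    dense_gap = embedding_gap(benchmark, "dense")
    agent_gap = embedding_gap(benchmark, "agent")
    print(f"{benchmark}: dense gap {dense_gap}, agentic gap {agent_gap}")
```

On both benchmarks the weaker embedding model gains more from the agent than the stronger one does, which is why the gap shrinks.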

The cost of autonomy and the future

There is no free lunch. Agentic retrieval is more expensive and slower than standard dense retrieval. On ViDoRe v3, the agent takes an average of 136 seconds per query and consumes approximately 760,000 input tokens and 6,300 output tokens per query. (Note: Sequential latency is measured on a single A100 GPU with a single concurrent Claude API call, to reflect the actual search time in a real-world use case, i.e. without searching multiple queries at the same time.)

However, we believe agentic retrieval is a very viable approach for high-stakes, complex queries. Our immediate next steps focus on cost reduction. We are actively investigating ways to distill these agentic reasoning patterns into smaller, specialized open-weight agents. By fine-tuning smaller models to handle the think-and-retrieve loop natively, we aim to achieve Opus-level accuracy at a fraction of the latency and cost.

Build your own agent pipeline

To top the leaderboards, we paired agents such as Claude Opus and gpt-oss with research embedding models, but the real strength of this architecture is its modularity. For production-ready deployments, we highly recommend pairing your agent of choice with the robust commercial embedding model llama-nemotron-embed-vl-1b-v2. To explore these models, dig into the tools, and start building your own highly generalizable retrieval workflow, visit the NeMo Retriever Library today.