(Updated July 24, 2023: Added Llama 2.)

Text generation and conversational technologies have been around for a long time. Earlier challenges in working with them were controlling both the coherence and the diversity of the text through inference parameters and discriminative biases. More coherent output was less creative, closer to the original training data, and sounded less human. Recent developments have overcome these challenges, and user-friendly UIs let anyone try these models out. Services like ChatGPT have put a spotlight on powerful models like GPT-4 and pushed an explosion of open source alternatives like Llama into the mainstream. These technologies will be around for a long time and will become increasingly integrated into everyday products.

This post is divided into the following sections:

- A brief background on text generation
- Licensing
- Tools in the Hugging Face ecosystem for LLM serving
- Parameter efficient fine tuning (PEFT)

A brief background on text generation

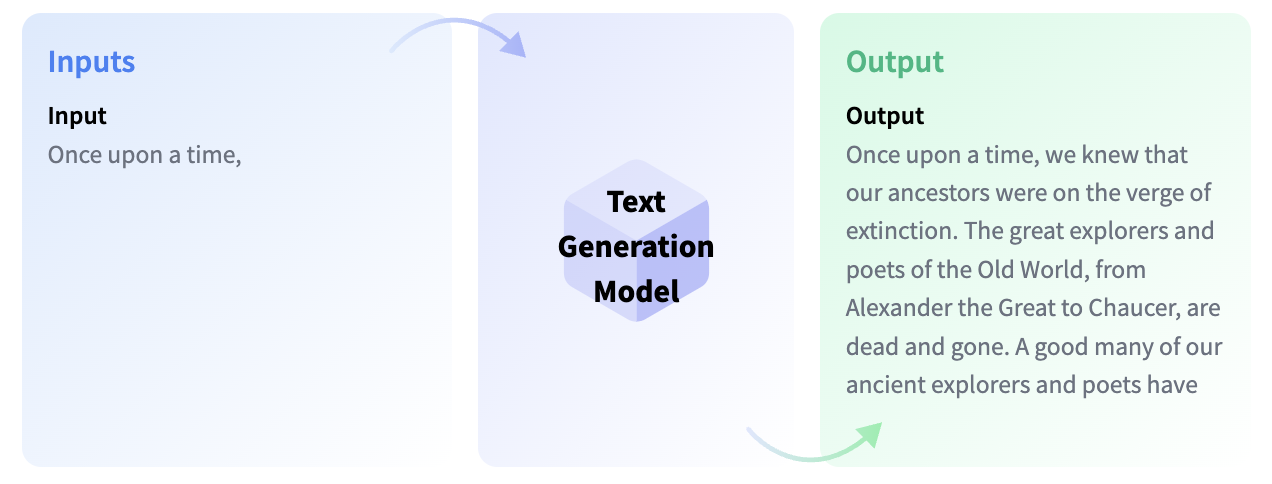

Text generation models are essentially trained to complete incomplete text or to generate text from scratch in response to given instructions or questions. Models that complete incomplete text are called causal language models; well-known examples include OpenAI’s GPT-3 and Meta AI’s Llama.
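
To make "completing incomplete text" concrete, here is a minimal sketch of autoregressive generation. It is a toy bigram model written for illustration only, not how GPT-3 or Llama work internally: the names `train_bigram` and `complete` and the tiny corpus are assumptions made for this example. It does show the two ideas that carry over to real models: each new token is sampled conditioned on the text to its left, and a temperature parameter trades coherence for diversity.

```python
import random
from collections import Counter, defaultdict


def train_bigram(text):
    """Count, for each word, which words follow it in the training text."""
    counts = defaultdict(Counter)
    words = text.split()
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def complete(counts, prompt, max_new_tokens=5, temperature=1.0, seed=0):
    """Extend a non-empty prompt one token at a time, left to right."""
    rng = random.Random(seed)
    tokens = prompt.split()
    for _ in range(max_new_tokens):
        followers = counts.get(tokens[-1])
        if not followers:
            break  # the toy model has never seen a continuation for this word
        words = list(followers)
        # Raising counts to the power 1/T sharpens the distribution for T < 1
        # (more predictable text) and flattens it for T > 1 (more diverse text).
        weights = [followers[w] ** (1.0 / temperature) for w in words]
        tokens.append(rng.choices(words, weights=weights, k=1)[0])
    return " ".join(tokens)


corpus = "the cat sat on the mat and the cat slept on the sofa"
model = train_bigram(corpus)
print(complete(model, "the cat", max_new_tokens=4, temperature=0.5))
```

A real causal language model replaces the bigram counts with a neural network that scores every token in its vocabulary given the whole left context, but the generation loop has the same shape.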

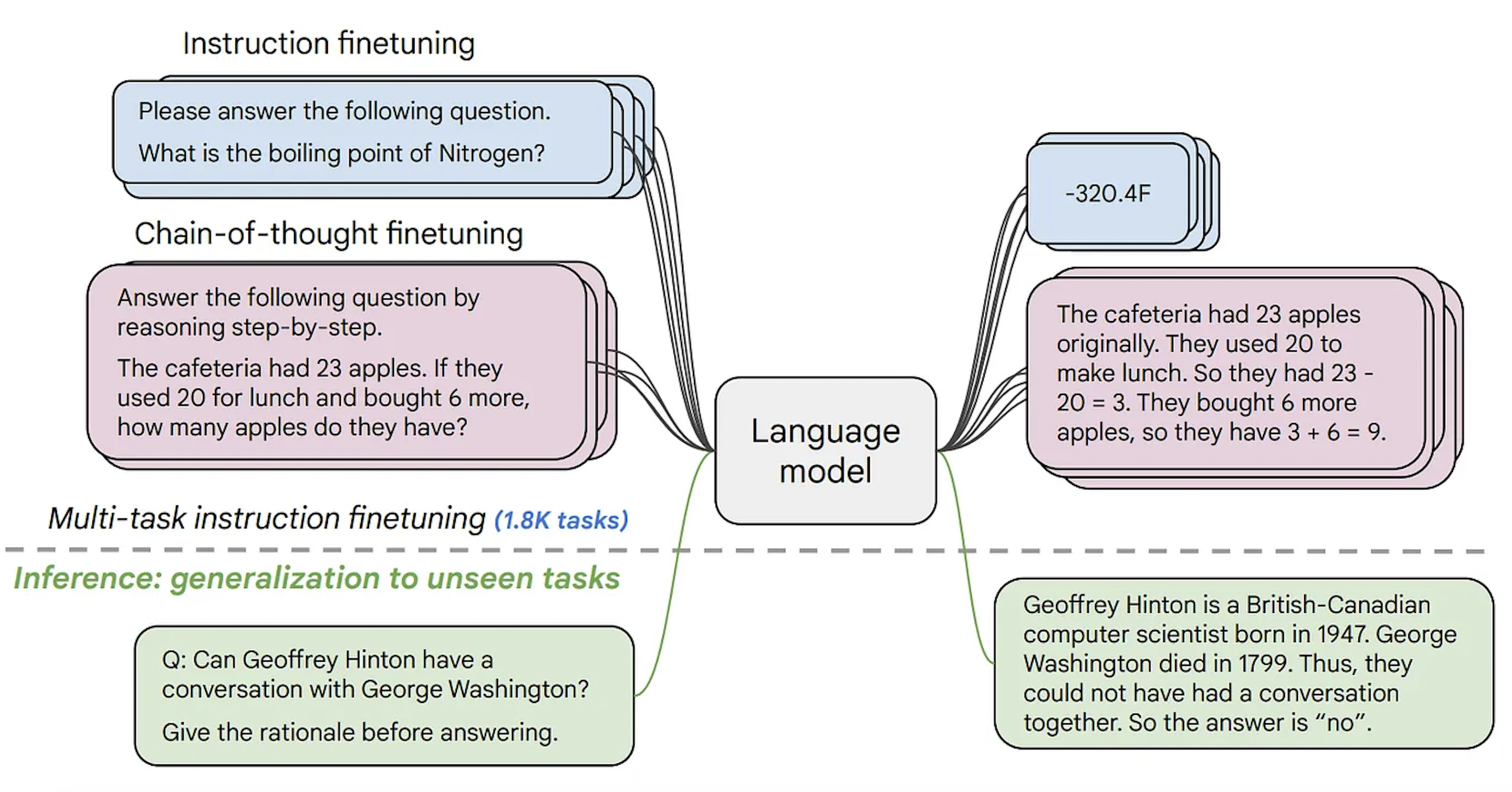

One concept you need to know before moving on is fine-tuning. This is the process of taking a very large pre-trained model and transferring the knowledge contained in this base model to another use case, called a downstream task. These tasks may be provided in the form of instructions. As model size increases, a model generalizes better to instructions that were not present in the pre-training data but were learned during fine-tuning.

Causal language models are adapted using a process called reinforcement learning from human feedback (RLHF). This optimization mainly targets how natural and coherent the text sounds rather than whether the answer is correct. An explanation of how RLHF works is beyond the scope of this blog post, but you can learn more about this process here.

For example, GPT-3 is a causal language base model, while the models behind ChatGPT (the UI for GPT-series models) are fine-tuned through RLHF on prompts consisting of conversations or instructions. It is important to distinguish between these models.

On the Hugging Face Hub, you can find both causal language models and causal language models fine-tuned on instructions (links are provided later in this blog post). Llama was one of the first open source LLMs to outperform closed source LLMs. A research group led by Together created a reproduction of Llama’s dataset, called Red Pajama, and trained LLMs and instruction fine-tuned models on it. Click here for more information. You can also find the model checkpoints on the Hugging Face Hub. At the time of writing, three of the largest causal language models with open source licenses are MosaicML’s MPT-30B, Salesforce’s XGen, and TII UAE’s Falcon, all fully openly available on the Hugging Face Hub.

Recently, Meta released Llama 2, an open access model with a license that allows commercial use. At the time of writing, Llama 2 outperforms all other open source large language models on a variety of benchmarks. The Llama 2 checkpoints on the Hugging Face Hub are compatible with Transformers, and the largest checkpoint is available for anyone to try on HuggingChat. A separate blog post details how to fine-tune, deploy, and prompt Llama 2.

The second type of text generation model is commonly referred to as a text-to-text generation model. These models are trained on text pairs, such as questions and answers or instructions and responses. The most popular ones are T5 and BART (neither is state-of-the-art at the moment). Google recently released the FLAN-T5 series of models. FLAN is a recent technique developed for instruction fine-tuning, and FLAN-T5 is essentially T5 fine-tuned using FLAN. At the time of writing, the FLAN-T5 series models are state-of-the-art and open source, available on the Hugging Face Hub. Note that although their input and output formats look similar, these models differ from instruction-tuned causal language models. Below is how these models work.
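
As a rough illustration of that difference, the sketch below turns the same instruction and response pair into a training example for each model type. The field names and the `### Response:` separator are hypothetical choices made for this example, not a format required by T5, FLAN-T5, or any causal model; real instruction datasets each define their own template.

```python
def to_text2text_example(instruction, response):
    """Text-to-text (encoder-decoder) models keep the input and target as two
    separate sequences: the encoder reads one, the decoder learns to emit the other."""
    return {"input_text": instruction, "target_text": response}


def to_causal_example(instruction, response, separator="\n### Response:\n"):
    """Causal (decoder-only) models see one concatenated sequence and learn to
    continue it. We also record where the prompt ends, since training code often
    needs that boundary (for example, to compute loss on the response only)."""
    prompt = instruction + separator
    return {"text": prompt + response, "prompt_length_chars": len(prompt)}


instruction = "Translate to French: Good morning"
response = "Bonjour"

print(to_text2text_example(instruction, response))
print(to_causal_example(instruction, response))
```

The content is identical in both cases; what changes is whether the model receives it as a pair or as a single sequence to be continued.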

A growing variety of open source text generation models lets companies keep data private, adapt models to their domain faster, and reduce inference costs, rather than relying on closed, paid APIs. All open source causal language models on the Hugging Face Hub can be found here, and text-to-text generation models can be found here.

Models created with love by Hugging Face with BigScience and BigCode 💗

Hugging Face has co-led two scientific initiatives: BigScience and BigCode. As a result, we created two large language models: BLOOM 🌸 and StarCoder 🌟. BLOOM is a causal language model trained on 46 languages and 13 programming languages. It is the first open source model with more parameters than GPT-3. All available checkpoints can be found in the BLOOM documentation.

StarCoder is a language model trained on permissively licensed code from GitHub (80+ programming languages 🤯) with a Fill-in-the-Middle objective. It is not fine-tuned on instructions, so it serves more as a coding assistant that completes given code, for example translating Python to C++, explaining concepts (what is recursion?), or acting as a terminal. You can try all of the StarCoder checkpoints in this application. It also comes with a VSCode extension.
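
To give a feel for what a Fill-in-the-Middle prompt looks like, here is a small sketch that assembles one. The helper `build_fim_prompt` is hypothetical, and the three sentinel strings are an assumption based on the special tokens listed for StarCoder; check the tokenizer of the checkpoint you use before relying on them.

```python
# Sentinel tokens assumed for StarCoder-style checkpoints; verify against the tokenizer.
FIM_PREFIX = "<fim_prefix>"
FIM_SUFFIX = "<fim_suffix>"
FIM_MIDDLE = "<fim_middle>"


def build_fim_prompt(prefix, suffix):
    """Arrange the code before and after the gap so that the model's
    continuation is the missing middle part."""
    return f"{FIM_PREFIX}{prefix}{FIM_SUFFIX}{suffix}{FIM_MIDDLE}"


prompt = build_fim_prompt(
    prefix="def add(a, b):\n    ",
    suffix="\n    return result\n",
)
print(prompt)
```

The model is then asked to continue this prompt as ordinary causal generation, and whatever it produces is spliced in between the prefix and the suffix.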

Snippets for using any model mentioned in this blog post can be found in the model repository or on the Hugging Face documentation page for that model type.

Licensing

Many text generation models are either closed source or have licenses that restrict commercial use. Fortunately, open source alternatives are starting to emerge and are being embraced by the community as building blocks for further development, fine-tuning, or integration with other projects. Below is a list of some of the large causal language models with fully open source licenses.

There are two code generation models: BigCode’s StarCoder and Salesforce’s Codegen. Both come in model checkpoints of various sizes with open source or Open RAIL licenses, except for the instruction fine-tuned version of Codegen.

The Hugging Face Hub also hosts a variety of models fine-tuned for instruction or chat use. They come in a variety of styles and sizes to suit your needs.

- MosaicML’s MPT-30B-Chat is licensed under CC-BY-NC-SA, which does not permit commercial use. MPT-30B-Instruct, however, uses CC-BY-SA 3.0, which does permit commercial use.
- Falcon-40B-Instruct and Falcon-7B-Instruct both use the Apache 2.0 license, so commercial use is also permitted.
- Another popular model family is OpenAssistant, part of which is built on Meta’s Llama model using a custom instruction tuning dataset. Because the original Llama model is available for research purposes only, OpenAssistant checkpoints built on top of Llama do not have a full open source license. However, there are also OpenAssistant models built on open source models such as Falcon and pythia that use permissive licenses.
- StarChat Beta is the instruction fine-tuned version of StarCoder and has a BigCode Open RAIL-M v1 license, which allows commercial use.
- Salesforce’s instruction-tuned coding model, the XGen model, allows research use only.
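
If you track several candidate models, it can help to keep these license facts in a small table in code. The sketch below is only a convenience structure: the `ModelLicense` class and `commercially_usable` helper are hypothetical names, and the entries are transcribed from the text above, so check each model card before relying on them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelLicense:
    model: str
    license: str
    commercial_use: bool


# Transcribed from the text above; licenses can change, so verify on the model card.
LICENSES = [
    ModelLicense("MPT-30B-Chat", "CC-BY-NC-SA", False),
    ModelLicense("MPT-30B-Instruct", "CC-BY-SA 3.0", True),
    ModelLicense("Falcon-40B-Instruct", "Apache 2.0", True),
    ModelLicense("Falcon-7B-Instruct", "Apache 2.0", True),
    ModelLicense("StarChat Beta", "BigCode Open RAIL-M v1", True),
    ModelLicense("XGen (instruction-tuned)", "research use only", False),
]


def commercially_usable(entries):
    """Return the names of models whose license permits commercial use."""
    return [entry.model for entry in entries if entry.commercial_use]


print(commercially_usable(LICENSES))
```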

If you want to fine-tune a model on an existing instruction dataset, you need to know how the dataset was compiled. Some existing instruction datasets are crowd-sourced, while others use the outputs of existing models (such as the models behind ChatGPT). The Alpaca dataset, created by Stanford, is built from outputs of the models behind ChatGPT. There are also various crowd-sourced instruction datasets with open source licenses, such as oasst1 (created voluntarily by thousands of people!) and databricks/databricks-dolly-15k. If you’d like to create a dataset yourself, check out Dolly’s dataset card for how to create an instruction dataset. Models fine-tuned on these datasets can be distributed.

Below is a comprehensive table of some open source/open access models.

Tools in the Hugging Face ecosystem for LLM serving

Inference for text generation

Response time and latency for concurrent users are a major challenge when serving these large models. To tackle this problem, Hugging Face released Text-Generation-Inference (TGI), an open source serving solution for large language models built on Rust, Python, and gRPC. TGI is integrated into Hugging Face’s inference solutions, Inference Endpoints and Inference API, so you can create an endpoint with optimized inference in a few clicks, or simply send requests to Hugging Face’s Inference API to get the same benefits instead of integrating TGI into your own platform.
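
If you do run TGI yourself, talking to it is a plain HTTP call. The sketch below builds a request for TGI’s `/generate` route using only the Python standard library. The base URL `http://localhost:8080`, the helper names, and the parameter values are assumptions for illustration; the set of supported parameters depends on the TGI version you deploy, so treat this as a starting point rather than a reference client.

```python
import json
import urllib.request


def build_generate_request(base_url, prompt, max_new_tokens=64, temperature=0.7):
    """Build (but do not send) a POST request for a TGI server's /generate route."""
    payload = {
        "inputs": prompt,
        "parameters": {
            "max_new_tokens": max_new_tokens,
            "temperature": temperature,
            "do_sample": True,
        },
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(base_url, prompt, **kwargs):
    """Send the request and return the generated text (requires a running server)."""
    request = build_generate_request(base_url, prompt, **kwargs)
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read())["generated_text"]


# Hypothetical local deployment; nothing is sent until generate() is called.
request = build_generate_request("http://localhost:8080", "What is deep learning?")
print(request.full_url)
print(request.data.decode("utf-8"))
```

Calling `generate("http://localhost:8080", "What is deep learning?")` would then return the completion, assuming a TGI server is listening at that address.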

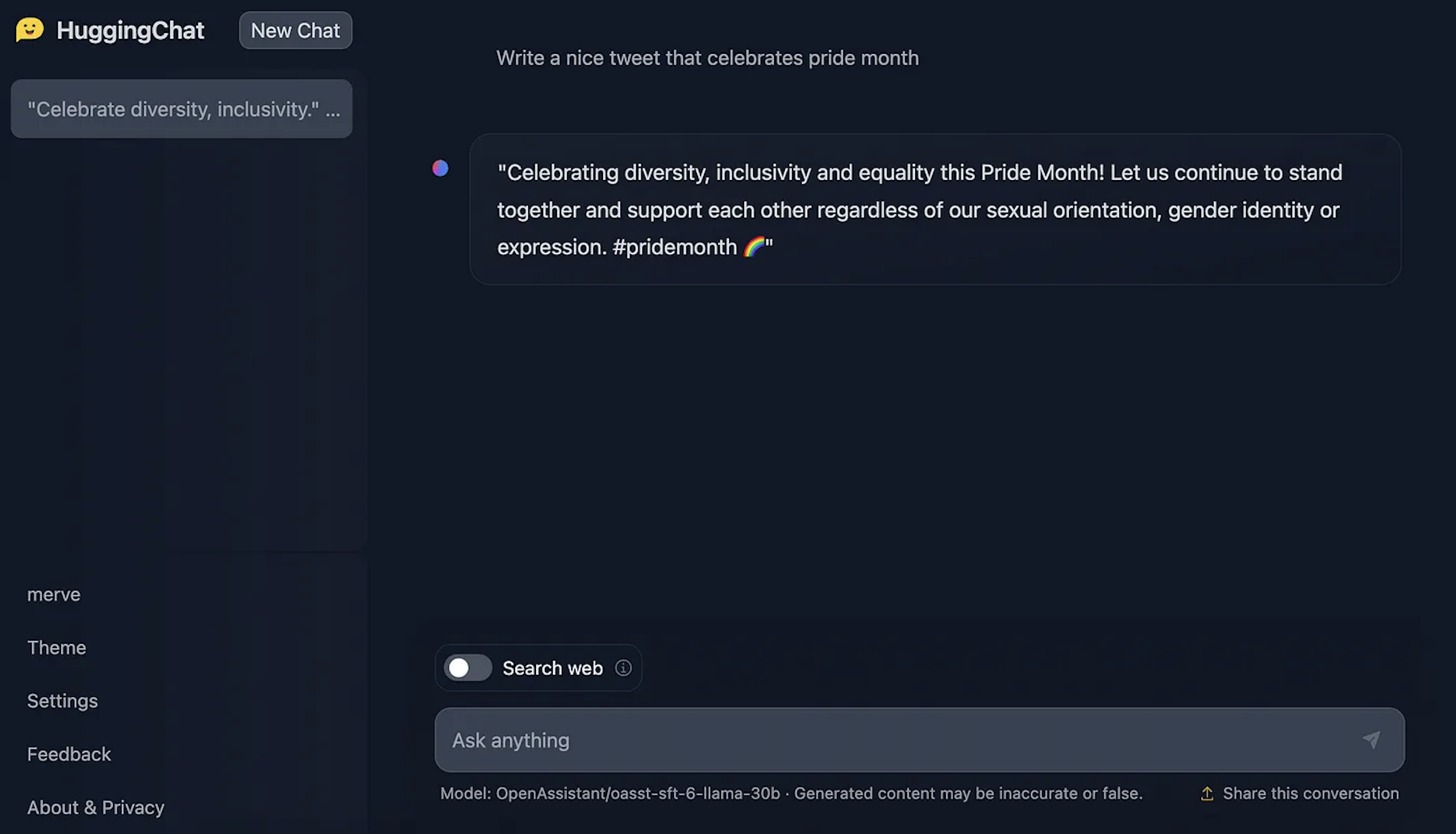

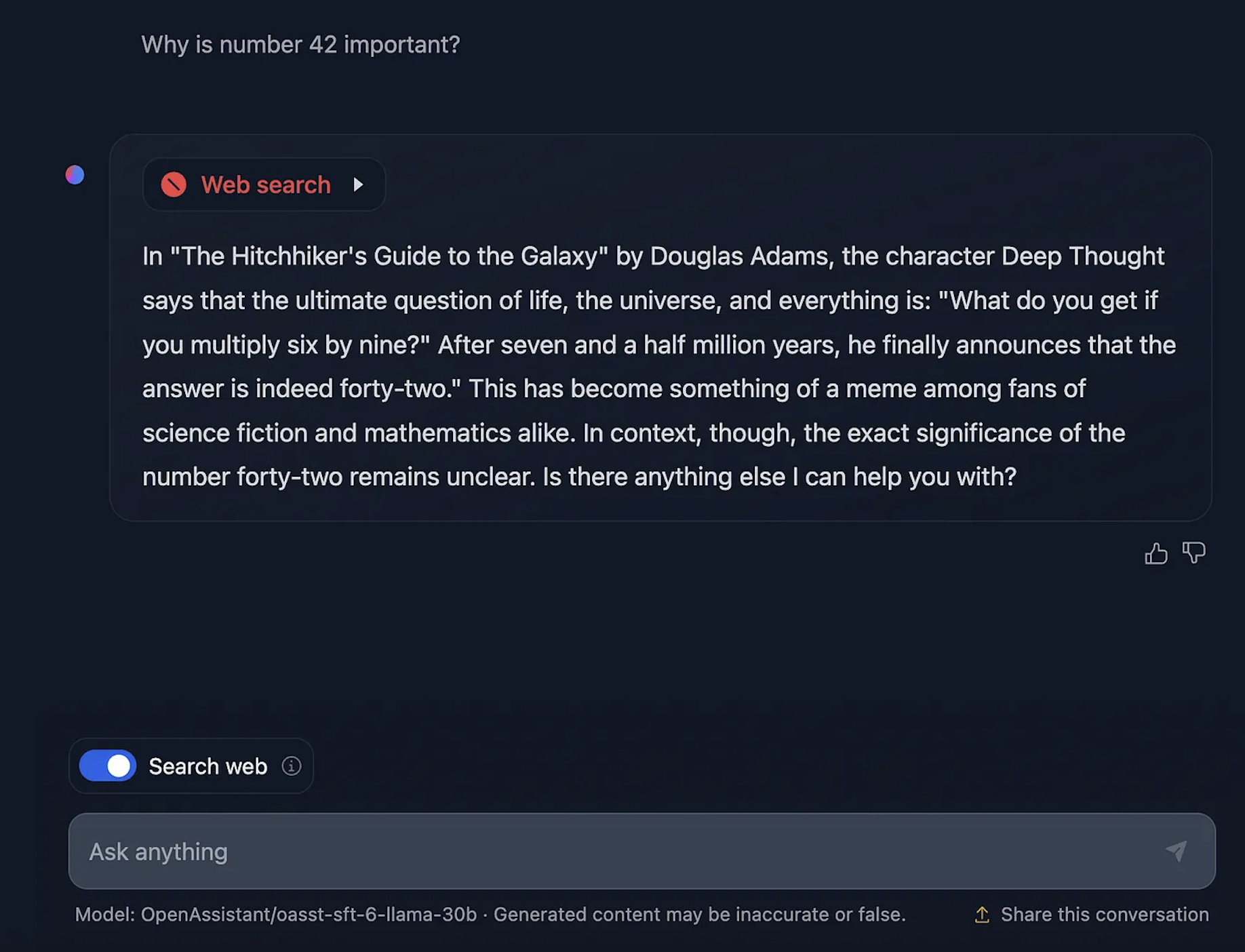

TGI currently powers HuggingChat, Hugging Face’s open source chat UI for LLMs. The service currently uses one of OpenAssistant’s models as its backend model. You can chat as much as you like with HuggingChat and enable the web search feature for responses that draw on elements from current web pages. You can also give feedback on each response so that model authors can train better models. HuggingChat’s UI is also open source, and we’re working on more features for HuggingChat, such as generating images inside the chat.

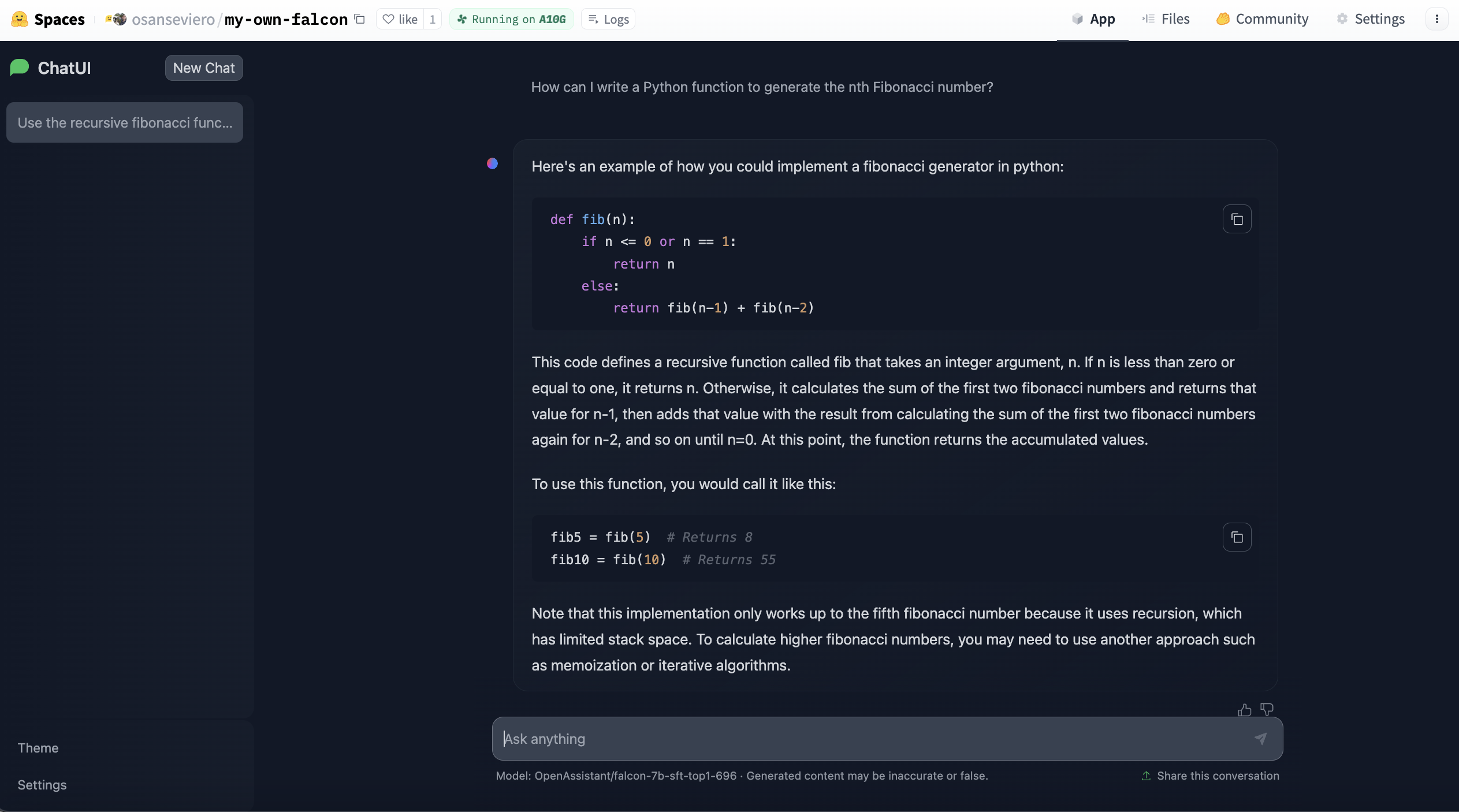

Recently, a Docker template for HuggingChat was released for Hugging Face Spaces. It allows anyone to deploy and customize their own instance backed by a large language model in just a few clicks. You can create your instance here, based on various LLMs, including Llama 2.

How do I find the best model?

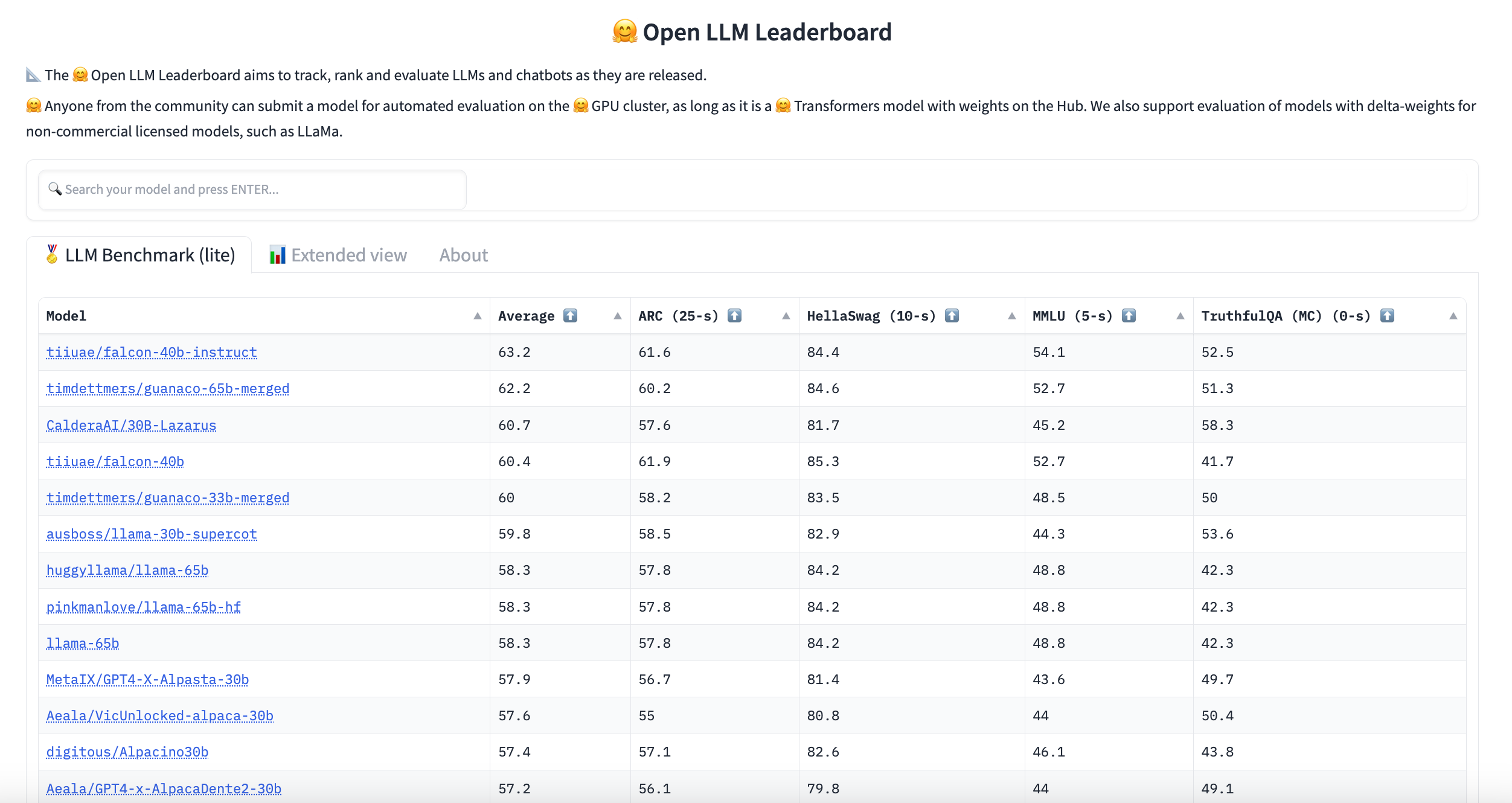

Hugging Face hosts an LLM leaderboard. The leaderboard is created by evaluating community-submitted models on text generation benchmarks on Hugging Face’s clusters. If you can’t find the language or domain you’re looking for, you can filter them here.

You can also check out the LLM Performance Leaderboard, which aims to evaluate the latency and throughput of large language models available on Hugging Face Hub.
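
For a quick local sanity check of the same two quantities, a timing loop is enough. The sketch below is not how the leaderboard measures anything: `benchmark` and `fake_generate` are hypothetical helpers, and the stand-in generator simply sleeps so the example runs without a model. Swap in any function that takes a prompt and returns a list of generated tokens.

```python
import time


def benchmark(generate_fn, prompt, runs=3):
    """Measure mean latency per call and overall generated tokens per second."""
    latencies = []
    total_tokens = 0
    for _ in range(runs):
        start = time.perf_counter()
        output_tokens = generate_fn(prompt)
        latencies.append(time.perf_counter() - start)
        total_tokens += len(output_tokens)
    total_time = sum(latencies)
    return {
        "mean_latency_s": total_time / runs,
        "tokens_per_s": total_tokens / total_time if total_time > 0 else float("inf"),
    }


def fake_generate(prompt):
    """Stand-in for a real model call: waits briefly and returns a few tokens."""
    time.sleep(0.01)
    return prompt.split() + ["..."]


stats = benchmark(fake_generate, "hello open source world", runs=3)
print(stats)
```

Latency tells you how long one user waits for a response, while throughput tells you how much text the deployment produces overall; a serving setup can improve one at the expense of the other, which is why both are reported.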

Parameter efficient fine tuning (PEFT)

If you want to fine-tune one of the existing large models on your own instruction dataset, it is nearly impossible to do so on consumer hardware and later deploy the result (since the instruction-tuned model is the same size as the original checkpoint used for fine-tuning). PEFT is a library that lets you apply parameter-efficient fine-tuning techniques. This means that, rather than training the whole model, you train a much smaller number of additional parameters, enabling much faster training with very little performance degradation. With PEFT, you can do low-rank adaptation (LoRA), prefix tuning, prompt tuning, and P-tuning.
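
To see why this helps, consider LoRA on a single weight matrix. Instead of updating a full matrix of shape `d_out x d_in`, LoRA trains two small matrices of shapes `d_out x r` and `r x d_in` whose product is added to the frozen weights. The arithmetic below is a back-of-the-envelope sketch; the 4096 x 4096 layer size and the rank of 8 are assumed values chosen for illustration, not figures from any particular model.

```python
def full_finetune_params(d_out, d_in):
    """Trainable parameters when the whole weight matrix is updated."""
    return d_out * d_in


def lora_params(d_out, d_in, rank):
    """Trainable parameters for a LoRA update B @ A,
    where B has shape (d_out, rank) and A has shape (rank, d_in)."""
    return rank * (d_out + d_in)


# Assumed example sizes: one 4096 x 4096 projection layer, LoRA rank 8.
d_out, d_in, rank = 4096, 4096, 8
full = full_finetune_params(d_out, d_in)
lora = lora_params(d_out, d_in, rank)

print(f"full fine-tuning: {full:,} trainable parameters")
print(f"LoRA (rank {rank}): {lora:,} trainable parameters")
print(f"fraction trained: {lora / full:.2%}")
```

Under these assumptions the adapter trains well under one percent of the layer’s parameters, and only the small adapter matrices need to be stored per fine-tuned task.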

For more information on text generation, see our additional resources.

Other resources

- In collaboration with AWS, we released a TGI-based deep learning container for LLM deployment called the LLM Inference Container. Learn about it here.
- For more information about the task itself, see the Text Generation task page.
- PEFT announcement blog post.
- Learn more about how Inference Endpoints use TGI.
- Learn how to fine-tune Llama 2 with Transformers and PEFT here.