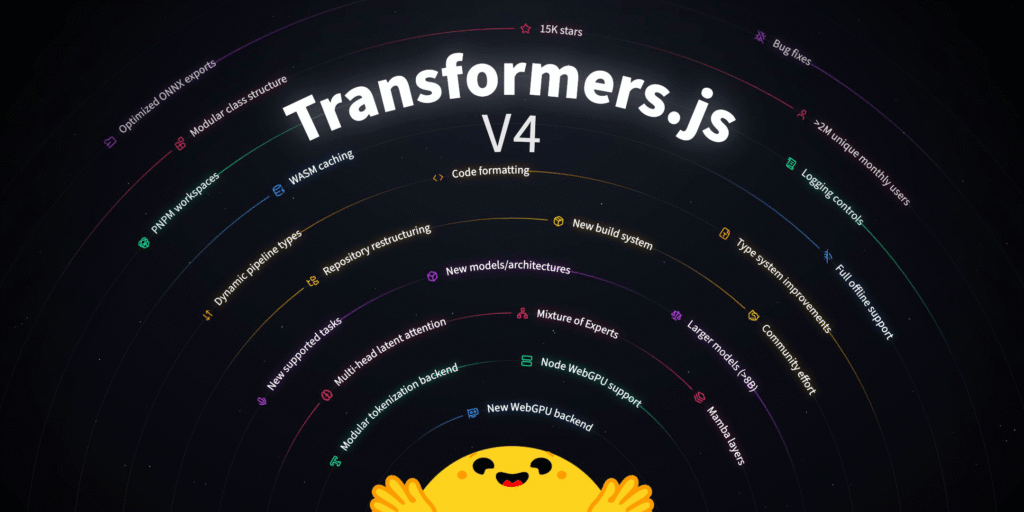

We’re excited to announce that Transformers.js v4 (preview) is now available on NPM. After nearly a year of development (we started in March 2025 🤯), it’s finally ready for you to test. Previously, users had to install v4 directly from source via GitHub, but now it’s as simple as running a single command:

npm i @huggingface/transformers@next

Until the full release, we will continue to publish v4 releases under the next tag on NPM, so expect regular updates.

Performance and runtime improvements

The biggest change is undoubtedly the new WebGPU runtime, which has been completely rewritten in C++. We worked closely with the ONNX Runtime team to thoroughly test this runtime across our nearly 200 supported model architectures, as well as many new v4-only architectures.

In addition to improved operator support (performance, accuracy, and coverage), this new WebGPU runtime lets you use the same Transformers.js code across a wide range of JavaScript environments, including browsers, server-side runtimes, and desktop applications. That’s right, you can now run WebGPU-accelerated models directly in Node, Bun, and Deno.
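
As a rough illustration of what "the same code in different environments" might look like, the sketch below picks a device string that could then be passed to a pipeline. The pickDevice helper is hypothetical and not part of Transformers.js. It assumes that a browser can be recognized by a window global and that WebGPU support in a browser can be detected via navigator.gpu. The model ID in the commented usage is only an example.

```javascript
// Hypothetical helper (not part of Transformers.js): choose a device string.
// Assumption: outside the browser we simply request WebGPU, since the post
// states that v4 supports it on Node, Bun, and Deno.
function pickDevice(scope = globalThis) {
  const isBrowser = typeof scope.window !== "undefined";
  if (!isBrowser) {
    return "webgpu";
  }
  // In a browser, fall back to WASM when the WebGPU API is not exposed.
  const hasWebGPU = Boolean(scope.navigator && scope.navigator.gpu);
  return hasWebGPU ? "webgpu" : "wasm";
}

// Usage sketch (needs @huggingface/transformers, so it is left commented out):
// import { pipeline } from "@huggingface/transformers";
// const extractor = await pipeline("feature-extraction", "Xenova/all-MiniLM-L6-v2", {
//   device: pickDevice(),
// });
// const embeddings = await extractor("Hello world", { pooling: "mean", normalize: true });
```

Passing the scope as a parameter keeps the helper easy to exercise with fake environments, which is why it does not read globalThis directly.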

We’ve proven that cutting-edge AI models can run 100% locally in the browser, and we’re now focused on performance: making these models run as fast as possible, even in resource-constrained environments. This required a complete rethink of our export strategy, especially for large language models. We achieve this by re-implementing new models operation by operation, leveraging specialized ONNX Runtime Contrib Operators such as com.microsoft.GroupQueryAttention, com.microsoft.MatMulNBits, or com.microsoft.QMoE to maximize performance.
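
To give a feel for the kind of low-bit weight storage that an operator like com.microsoft.MatMulNBits works with, here is a minimal sketch of 4-bit block quantization. It is an illustration only, not ONNX Runtime's kernel or the Transformers.js export code: the function names, the fixed zero point of 8, and the choice of scale are assumptions made for this example.

```javascript
// Illustrative 4-bit block quantization (not ONNX Runtime's actual kernel).
// Each block of weights shares one scale; values are stored as integers in
// [0, 15] around an assumed fixed zero point of 8.
const ZERO_POINT = 8;

function quantizeBlock(weights) {
  const maxAbs = Math.max(...weights.map((w) => Math.abs(w)));
  // Map [-maxAbs, maxAbs] onto the integer range [-7, 7].
  const scale = maxAbs === 0 ? 1 : maxAbs / 7;
  const q = weights.map((w) => Math.round(w / scale) + ZERO_POINT);
  return { scale, q };
}

function dequantizeBlock({ scale, q }) {
  return q.map((v) => (v - ZERO_POINT) * scale);
}

const block = [0.7, -0.35, 0.1, 0.0];
const quantized = quantizeBlock(block);
const restored = dequantizeBlock(quantized);
console.log(quantized.q, restored); // restored values differ from the originals by at most scale / 2
```

Storing 4 bits per weight plus one scale per block is what makes large models small enough to download and keep in memory, at the cost of a bounded rounding error per weight.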

For example, by adopting the com.microsoft.MultiHeadAttention operator, we were able to achieve a speedup of up to 4x for BERT-based embedding models.

This update enables full offline support by caching WASM files locally in the browser, allowing users to run Transformers.js applications without an internet connection after the initial download.
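
The general idea behind this kind of offline support is cache-first loading, sketched below. This is not the library's implementation: loadWithCache, the Map-like cache, and the injected fetcher are hypothetical stand-ins chosen so the example runs anywhere.

```javascript
// Cache-first loading: return the cached copy if present; otherwise download
// once, store the result, and return it. `cache` is any Map-like store and
// `fetcher` is any async function returning the bytes for a URL (stand-ins).
async function loadWithCache(url, cache, fetcher) {
  if (cache.has(url)) {
    return cache.get(url); // offline path: no network access needed
  }
  const bytes = await fetcher(url); // first visit: requires a connection
  cache.set(url, bytes);
  return bytes;
}
```

In a browser, the store would more likely be the Cache API (caches.open, cache.match, cache.put) than an in-memory Map, so that the files survive page reloads.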

Rebuilding the repository

Developing a new major version provides an opportunity to invest in the codebase and undertake long-overdue refactoring efforts.

PNPM workspace

Previously, the GitHub repository doubled as our npm package. This worked fine as long as the repository only exposed a single library. However, looking ahead, we see the need for various subpackages that depend heavily on the Transformers.js core while addressing different use cases, such as library-specific implementations or small utilities that most users don’t need but some do.

That’s why we converted the repository to a monorepo using pnpm workspaces. This allows us to ship smaller packages that depend on @huggingface/transformers without the overhead of maintaining separate repositories.

Modular class structure

Another major refactoring effort targeted the ever-growing models.js file. In v3, all available models were defined in a single file of over 8,000 lines, making maintenance increasingly difficult. In v4, we’ve broken this down into smaller, more focused modules with clear separation between utility functions, core logic, and model-specific implementation. This new structure improves readability and makes adding new models much easier. Developers can now focus on model-specific logic instead of navigating through thousands of lines of unrelated code.

Examples repository

In v3, many Transformers.js example projects lived directly in the main repository. In v4, we moved them into a dedicated repository, allowing us to maintain a cleaner codebase focused on the core library. This also makes it easier for users to find and contribute examples without having to sift through the main repository.

Prettier

We updated the Prettier configuration and reformatted all files in the repository. This ensures consistent formatting across the codebase, with all future PRs automatically following the same style. No more arguments about formatting: Prettier takes care of everything, keeping the code clean and readable for everyone.

New models and architectures

Thanks to our new export strategy and ONNX Runtime’s expanded support for custom operators, we were able to add many new models and architectures to Transformers.js v4. These include popular models such as GPT-OSS, Chatterbox, GraniteMoeHybrid, LFM2-MoE, HunYuanDenseV1, Apertus, Olmo3, FalconH1, Youtu-LLM, and more. Many of these required implementing support for advanced architectural patterns such as Mamba (a state-space model), Multi-head Latent Attention (MLA), and Mixture of Experts (MoE). Perhaps most importantly, all of these models are WebGPU compatible, allowing users to run them directly in a browser or server-side JavaScript environment with hardware acceleration. Stay tuned for exciting demos of these new models in action.

New build system

We migrated our build system from Webpack to esbuild, and the results were amazing. Build times dropped from 2 seconds to just 200ms, a 10x improvement that makes development iterations significantly faster. Speed is not the only advantage, though: bundle sizes also decreased by an average of 10% across all builds. The most notable improvement is in the default export, transformers.web.js, which is now 53% smaller, meaning faster downloads and quicker startup times for users.

Standalone Tokenizers.js library

A frequent request from our users was to extract the tokenization logic into a separate library, and with v4 we’ve done just that. @huggingface/tokenizers is a complete refactor of the tokenization logic, designed to work seamlessly across browsers and server-side runtimes. At only 8.8kB (gzipped) with zero dependencies, it’s incredibly lightweight while remaining fully type-safe.

Code example:

import { Tokenizer } from "@huggingface/tokenizers";

// Load the tokenizer files from the Hugging Face Hub
const modelId = "HuggingFaceTB/SmolLM3-3B";
const tokenizerJson = await fetch(
  `https://huggingface.co/${modelId}/resolve/main/tokenizer.json`
).then(res => res.json());
const tokenizerConfig = await fetch(
  `https://huggingface.co/${modelId}/resolve/main/tokenizer_config.json`
).then(res => res.json());

// Create the tokenizer
const tokenizer = new Tokenizer(tokenizerJson, tokenizerConfig);

// Tokenize and encode text
const tokens = tokenizer.tokenize("Hello World");
const encoded = tokenizer.encode("Hello World");

This separation keeps the core of Transformers.js focused and lean, while providing a versatile standalone tool that any WebML project can use independently.

Other improvements

We’ve made several quality-of-life improvements throughout the library. The type system has been enhanced with dynamic pipeline types that adapt based on the inputs, improving both developer experience and type safety.

Logging has been improved to give users more control and clearer feedback while models are running. Additionally, we added support for larger models with more than 8B parameters. In our testing, we were able to run GPT-OSS 20B (q4f16) on an M4 Pro Max at approximately 60 tokens per second.

Acknowledgment

We would like to express our sincere thanks to everyone who contributed to this major release, especially the ONNX Runtime team for their great work on the new WebGPU runtime and their support throughout development, as well as all external contributors and early testers.