AI WebTV is an experimental demo that showcases the latest advances in automatic video and music synthesis.

👉 Visit the AI WebTV Space to watch the stream now.

If you are on a mobile device, you can watch the stream from the Twitch mirror.

Concept

The purpose of AI WebTV is to demonstrate, in a fun and accessible way, videos generated with open-source text-to-video models such as Zeroscope, together with music generated by MusicGen.

These open source models can be found on the Hugging Face hub.

Individual video sequences are intentionally short, so WebTV should be viewed as a tech demo/showreel rather than an actual show (with art direction and programming).

Architecture

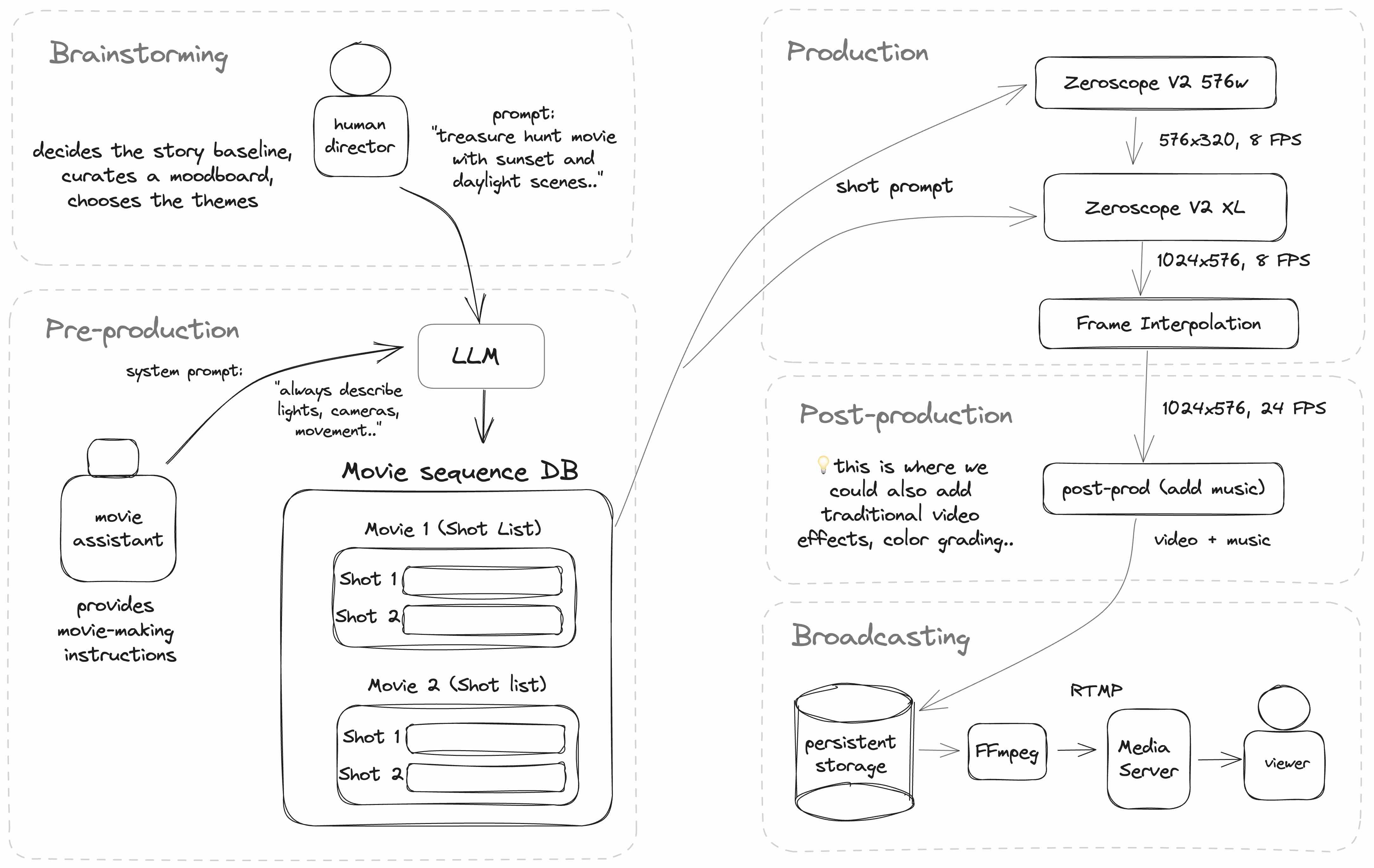

AI WebTV works by taking a series of video shot prompts and passing them through a text-to-video model to generate a series of takes.

Additionally, a base theme and idea (written by a human) are passed to an LLM (in this case ChatGPT) to generate a variety of individual prompts for each video clip.

The diagram above shows AI WebTV’s current architecture.

Implementing the pipeline

WebTV is implemented in Node.js and TypeScript and uses various services hosted on Hugging Face.

Text-to-video model

The core video model is Zeroscope V2, a model based on ModelScope.

Zeroscope consists of two parts that can be chained together: a first pass that generates the video clip, and a second pass with Zeroscope XL that upscales it.

👉 The same prompt should be used for both generation and upscaling.
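The chaining described above could be sketched as follows. This is a minimal illustration and not AI WebTV's actual code: `VideoPass` and `generateTake` are hypothetical names, and each pass is assumed to be an async function that resolves to a URL or path of the resulting clip.

```typescript
// Hypothetical signature for one Zeroscope pass: it takes the prompt (and,
// for the upscaling pass, the clip produced by the previous pass) and
// resolves to a URL or path of the resulting clip.
type VideoPass = (prompt: string, inputClip?: string) => Promise<string>

// Chains a generation pass and an upscaling pass, reusing the same prompt.
async function generateTake(
  prompt: string,
  generate: VideoPass,
  upscale: VideoPass
): Promise<string> {
  const baseClip = await generate(prompt)
  // The upscaling pass receives the exact prompt used for generation.
  return upscale(prompt, baseClip)
}
```

Passing the two passes in as functions keeps the sketch independent of where the models are hosted; in practice each pass would wrap a call to one of the Spaces.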

Calling the video chain

To create a quick prototype, WebTV runs Zeroscope from two duplicated Hugging Face Spaces running Gradio, which are called using the @gradio/client NPM package. The original space can be found here.

Search for Zeroscope on the hub to find other spaces deployed by the community.

👉 Public Spaces can become overcrowded and may be paused at any time. If you want to deploy your own system, please duplicate these Spaces and run them under your own account.
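Because a shared Space may be busy or temporarily unavailable, a caller may want to retry failed requests. The helper below is a hedged sketch of one common approach (exponential backoff); `withRetry`, `maxAttempts`, and `baseDelayMs` are hypothetical names and defaults, not something AI WebTV is known to use.

```typescript
// Retries an async operation, doubling the wait between attempts.
// The default attempt count and delay are illustrative assumptions.
async function withRetry<T>(
  operation: () => Promise<T>,
  maxAttempts = 3,
  baseDelayMs = 1000
): Promise<T> {
  let lastError: unknown
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return await operation()
    } catch (error) {
      lastError = error
      // Wait 1x, 2x, 4x... the base delay, except after the final attempt.
      if (attempt < maxAttempts - 1) {
        const delayMs = baseDelayMs * Math.pow(2, attempt)
        await new Promise<void>(resolve => setTimeout(resolve, delayMs))
      }
    }
  }
  // All attempts failed: surface the last error to the caller.
  throw lastError
}
```

Any function that calls a Space can be wrapped this way, for example `withRetry(() => callSpace(prompt))`, where `callSpace` stands for your own request function.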

Using models hosted in spaces

Spaces using Gradio have the ability to expose a REST API, which can be called from Node using the @gradio/client module.

For example:

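import { client } from "@gradio/client"

export const generateVideo = async (prompt: string) => {
  // URL of the Space hosting the model (placeholder)
  const instance = "*** Space URL ***"
  const api = await client(instance)

  // Call the Space's "/run" endpoint with an array of parameters
  const { data } = await api.predict("/run", [
    prompt,
    42,
    24,
    35
  ])

  // The first result describes the generated file
  const { orig_name } = data[0][0]

  // Remote URL from which the generated file can be fetched
  const remoteUrl = `${instance}/file=${orig_name}`
  return remoteUrl
}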

Post-processing

Once an individual take (video clip) is upscaled, it is passed to a frame interpolation algorithm, FILM (Frame Interpolation for Large Motion).

During post-processing, we also add MusicGen-generated music.

Broadcasting the stream

Note: There are multiple tools available for creating video streams. AI WebTV currently uses FFmpeg to read playlists consisting of mp4 video files and m4a audio files.

Here is an example of creating such a playlist.

import { promises as fs } from "fs"
import path from "path"

// Folder containing the generated video files (placeholder)
const dir = "**Path to video folder**"

// List the folder and keep only the full paths of the .mp4 files
const allFiles = await fs.readdir(dir)
const allVideos = allFiles
  .map(file => path.join(dir, file))
  .filter(filePath => filePath.endsWith(".mp4"))

// Build the playlist in FFmpeg's ffconcat format
let playlist = "ffconcat version 1.0\n"
allVideos.forEach(filePath => {
  playlist += `file '${filePath}'\n`
})

await fs.writeFile("playlist.txt", playlist)

This produces the following playlist content:

ffconcat version 1.0
file 'Video 1.mp4'
file 'Video 2.mp4'
…
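A file name that contains a single quote would break the quoted `file` lines. As a hedged sketch, the playlist text could be produced by a small pure function that escapes such names following FFmpeg's quoting rules (close the quote, add an escaped quote, reopen the quote). `buildPlaylist` is a hypothetical helper, not part of AI WebTV.

```typescript
// Builds ffconcat playlist text from a list of file paths.
const buildPlaylist = (filePaths: string[]): string => {
  const lines = filePaths.map(filePath => {
    // Inside single quotes nothing can be escaped, so a literal quote
    // is written as: end quote, backslash-quote, start quote.
    const escaped = filePath.replace(/'/g, "'\\''")
    return `file '${escaped}'`
  })
  return ["ffconcat version 1.0", ...lines].join("\n") + "\n"
}
```

Keeping this step free of file system access makes it easy to check the generated text before handing it to FFmpeg.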

Next, we will use FFmpeg again to read this playlist and send the FLV stream to the RTMP server. Although FLV is an older format, it is still popular in the real-time streaming world due to its low latency.

ffmpeg -y -nostdin \
  -re \
  -f concat \
  -safe 0 -i channel_random.txt -stream_loop -1 \
  -loglevel error \
  -c:v libx264 -preset veryfast -tune zerolatency \
  -shortest \
  -f flv rtmp://

FFmpeg has many configuration options; please refer to the official documentation for more information.
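Since the rest of the pipeline runs in Node, the same command can be assembled as an argument array. The sketch below mirrors the flags and their order from the command above; `buildStreamArgs` is a hypothetical helper, and both the playlist path and the RTMP URL are parameters you would supply yourself.

```typescript
// Builds the FFmpeg argument list for streaming a playlist to an RTMP server.
const buildStreamArgs = (playlistPath: string, rtmpUrl: string): string[] => [
  "-y", "-nostdin",            // never prompt; do not read from stdin
  "-re",                       // read the input at its native frame rate
  "-f", "concat",              // use the concat demuxer for the playlist
  "-safe", "0",                // accept file paths the demuxer considers unsafe
  "-i", playlistPath,
  "-stream_loop", "-1",        // loop option, positioned as in the command above
  "-loglevel", "error",        // only log errors
  "-c:v", "libx264",           // encode video with x264
  "-preset", "veryfast",
  "-tune", "zerolatency",
  "-shortest",
  "-f", "flv", rtmpUrl,        // send an FLV stream to the RTMP endpoint
]
```

The resulting array can be passed to `spawn("ffmpeg", args)` from Node's `child_process` module, which avoids shell quoting issues with file names.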

For RTMP servers, open source implementations such as the NGINX-RTMP module can be found on GitHub.

AI WebTV itself uses node-media-server.

💡 You can also stream directly to one of the Twitch RTMP entry points. See Twitch documentation for more information.

Observations and examples

Here are some examples of generated content.

The first thing you’ll notice is that applying the second pass of Zeroscope XL significantly improves image quality. The effect of frame interpolation is also clearly visible.

Characters and scene structure

Dynamic scene simulation

What’s really appealing about Text-to-Video models is their ability to emulate the real-world phenomena they were trained on.

We’ve seen this with large language models and their ability to synthesize convincing content that mimics human responses, but applying this to video takes it to a whole new dimension.

A video model predicts the next frame of a scene containing moving objects such as fluids, people, animals, and vehicles. Currently, this emulation is not perfect, but it will be interesting to evaluate future models (trained on large-scale or specialized datasets, such as animal locomotion) for their accuracy in reproducing physical phenomena and their ability to simulate agent behavior.

💡 It would be interesting to see these features explored further in the future, including training video models on large video datasets covering more phenomena.

Styling and effects

Failure examples

Wrong direction: The model sometimes has trouble with movement and direction. For example, here the clip appears to be playing backwards. Also, the modifier keyword “green” was not taken into account.

Rendering errors in realistic scenes: You may see artifacts such as vertical lines or wave motion. The cause is unknown, but it may be due to the combination of keywords used.

Text or objects inserted into the image: The model sometimes inserts words from the prompt, such as “IMAX”, into the scene. Mentioning “Canon EOS” or “Drone footage” in the prompt can also make those objects appear in the video.

In the following example, the word “llama” inserts a llama into the scene, but the word “llama” itself also appears twice, written in flames.

Recommendations

Here are some initial recommendations based on our observations so far.

Using video-specific prompt keywords

As you may already know from Stable Diffusion, if you don’t prompt a specific aspect of the image, things like clothing color or time of day can become random or be assigned a generic value such as neutral daylight.

The same applies to video models: you need to be specific. Examples include camera and character movement, their orientation, speed, and direction. You can leave these unspecified for creative purposes (idea generation), but this may not always produce the desired result (e.g. entities being animated in reverse).
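One way to make sure these aspects are never forgotten is to assemble each prompt from named fields. The sketch below is only an illustration: `ShotDescription`, its fields, and `buildShotPrompt` are hypothetical, and the comma-separated keyword format is an assumption rather than a requirement of the model.

```typescript
// Hypothetical structured description of a single video shot.
interface ShotDescription {
  subject: string
  action?: string          // e.g. "walking forward"
  cameraMovement?: string  // e.g. "camera panning left"
  direction?: string       // e.g. "moving from left to right"
  speed?: string           // e.g. "slow motion"
}

// Joins the fields that are present into a single comma-separated prompt.
const buildShotPrompt = (shot: ShotDescription): string =>
  [shot.subject, shot.action, shot.cameraMovement, shot.direction, shot.speed]
    .filter((part): part is string => Boolean(part && part.trim()))
    .map(part => part.trim())
    .join(", ")
```

Fields left undefined are simply omitted, so the same helper works for tightly specified shots and for looser, exploratory prompts.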

Maintain consistency between scenes

If you plan to create a sequence of multiple videos, be sure to add as much detail as possible to each prompt. Otherwise, important details such as color may be lost from one sequence to the next.

💡 This also improves image quality, as the prompt is used for the upscaling pass with Zeroscope XL.

Take advantage of frame interpolation

Frame interpolation is a powerful tool that can repair small rendering errors and turn many defects into features, especially in scenes with a lot of animation or where cartoon effects are acceptable. The FILM algorithm smooths out elements of a frame using the preceding and following events in the video clip.

This works well for displacing the background as the camera pans or rotates, and it also gives you creative freedom, such as controlling the number of frames after generation to create slow-motion effects.
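To make the slow-motion idea concrete, here is a small worked calculation. It assumes the interpolator uses recursive midpoint insertion, where each pass adds one new frame between every pair of neighbouring frames; the function names and the 24 fps playback rate are illustrative assumptions.

```typescript
// Number of frames after `passes` rounds of midpoint interpolation:
// each round doubles the number of gaps between frames.
const framesAfterInterpolation = (inputFrames: number, passes: number): number =>
  inputFrames < 2 ? inputFrames : (inputFrames - 1) * Math.pow(2, passes) + 1

// Playback duration in seconds at a given frame rate.
const durationSeconds = (frameCount: number, fps: number): number => frameCount / fps

const interpolatedFrames = framesAfterInterpolation(24, 2) // (24 - 1) * 4 + 1 = 93
console.log(interpolatedFrames, durationSeconds(interpolatedFrames, 24)) // 93 3.875
```

Under these assumptions, a 24-frame clip that lasts one second at 24 fps becomes a clip of almost four seconds when the interpolated frames are played back at the same rate.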

Future initiatives

We hope you enjoy the AI WebTV stream, and that it inspires you to build more in this space.

Since this was a first attempt, many things were not the focus of the tech demo: generating longer and more diverse sequences, adding audio (sound effects, dialogue), generating and orchestrating complex scenarios, or giving a language model agent more control over the pipeline.

Some of these ideas may make it into future updates to AI WebTV, but we’re also excited to see what our community of researchers, engineers, and builders comes up with.