We recently announced Inference for PROs, a new offering that makes larger models accessible to a broader audience. This opens up new possibilities for running end-user applications using Hugging Face as a platform.

One example of such an application is the AI Comic Factory, a Space that has proven extremely popular. Thousands of users have used it to create their own AI comic panels, and it has fostered its own community of regular users. They share their creations, and some have even opened pull requests.

This tutorial shows you how to fork and configure the AI Comic Factory to avoid long wait times and deploy it to your own private Space using the Inference API. No strong technical skills are required, but some knowledge of APIs and environment variables, along with a general understanding of LLMs and Stable Diffusion, is recommended.

Get started

First, make sure you sign up for a PRO Hugging Face account, which grants you access to the Llama-2 and SDXL models.

How AI Comic Factory works

The AI Comic Factory is a bit different from other Spaces running on Hugging Face: it is a NextJS application deployed using Docker, and it is based on a client-server approach that requires two APIs to work:

- a Language Model API (currently Llama-2)
- a Stable Diffusion API (currently SDXL 1.0)
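To make the client-server flow more concrete, here is a hypothetical Python sketch of the data flow only. The app itself is written in TypeScript (NextJS), and make_comic, fake_llm and fake_render are illustrative names, with offline stubs standing in for the two real APIs.

```python
from typing import Callable, List

# Illustrative type aliases for the two backends (hypothetical names).
LlmFn = Callable[[str], List[str]]  # story -> one prompt per panel
ImageFn = Callable[[str], bytes]    # prompt -> image bytes


def make_comic(story: str, llm: LlmFn, render: ImageFn) -> List[bytes]:
    """Ask the language model for panel prompts, then render each prompt."""
    panel_prompts = llm(story)                 # call 1: language model API
    return [render(p) for p in panel_prompts]  # call 2: image API, once per panel


def fake_llm(story: str) -> List[str]:
    # Offline stub: a real backend would return LLM-written panel descriptions.
    return [f"panel {i + 1}: {story}" for i in range(4)]


def fake_render(prompt: str) -> bytes:
    # Offline stub: a real backend would return image bytes from SDXL.
    return prompt.encode("utf-8")


panels = make_comic("a cat opens a bakery", fake_llm, fake_render)
print(len(panels))
```

Passing the backends in as functions is what lets either API be swapped out, which is the same idea behind the engine settings described later.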

Duplicating the Space

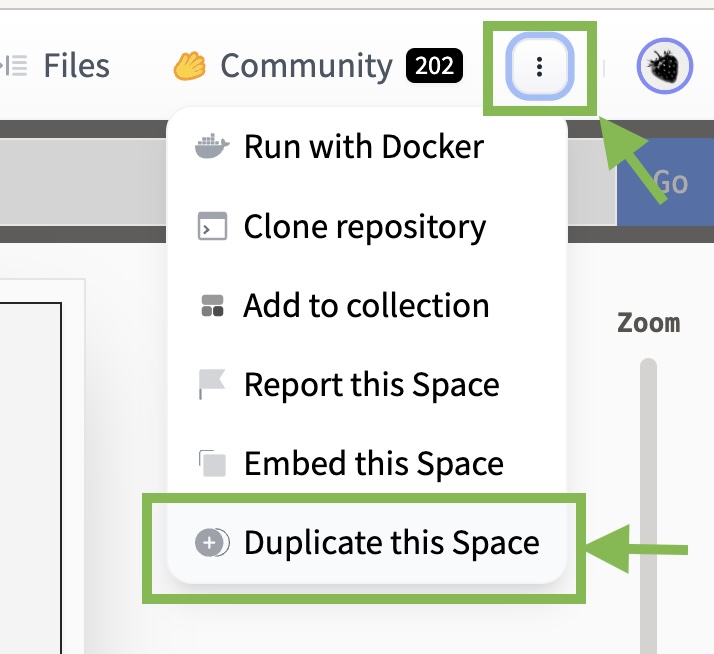

To duplicate the AI Comic Factory, go to the Space and click "Duplicate".

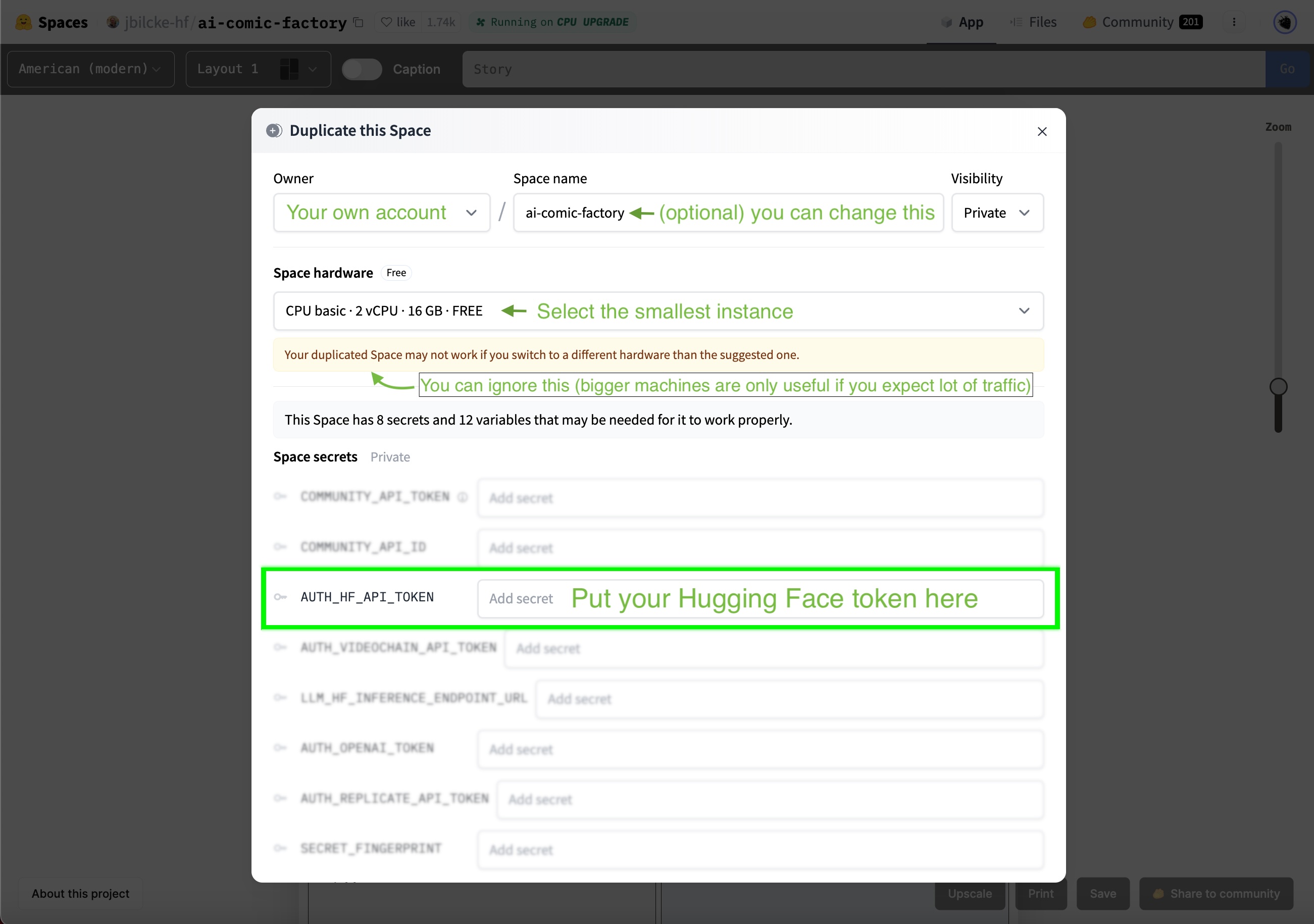

You'll see that the Space owner, name, and visibility are already filled in, so you can leave those values as they are.

Your copy of the Space runs inside a Docker container that doesn't require many resources, so you can use the smallest instance. The official AI Comic Factory Space uses a bigger CPU instance because it serves a large user base.

To run the AI Comic Factory under your account, you need to configure your Hugging Face token.
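If you prefer scripting the duplication instead of clicking through the UI, the following is a sketch using duplicate_space from the huggingface_hub library. Several details are assumptions, not taken from this guide: the source Space ID jbilcke-hf/ai-comic-factory, the secret name AUTH_HF_API_TOKEN (check the project's .env file for the exact name), and the helper names token_secret and duplicate_comic_factory, which are hypothetical. "cpu-basic" is the smallest CPU hardware flavor.

```python
from typing import Dict, List, Optional

# Assumption: ID of the official Space being duplicated.
SOURCE_SPACE = "jbilcke-hf/ai-comic-factory"
# Assumption: name of the secret the app reads the token from; check the .env file.
TOKEN_SECRET = "AUTH_HF_API_TOKEN"


def token_secret(hf_token: str) -> List[Dict[str, str]]:
    """Build the secrets list in the {"key": ..., "value": ...} shape duplicate_space accepts."""
    return [{"key": TOKEN_SECRET, "value": hf_token}]


def duplicate_comic_factory(
    to_id: str,
    hf_token: str,
    variables: Optional[List[Dict[str, str]]] = None,
):
    """Duplicate the Space as private on the smallest CPU hardware (makes a network call)."""
    # Imported lazily so token_secret can be used without huggingface_hub installed.
    from huggingface_hub import duplicate_space

    return duplicate_space(
        SOURCE_SPACE,
        to_id=to_id,
        private=True,
        token=hf_token,
        hardware="cpu-basic",
        secrets=token_secret(hf_token),
        variables=variables,
    )
```

For example, duplicate_comic_factory("your-username/my-comic-factory", "hf_...") would create the private copy with the token stored as a secret rather than a public variable. The engine settings covered in the next section can be passed through variables in the same key/value shape.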

Selecting the LLM and SD engines

The AI Comic Factory supports various backend engines, which can be configured using two environment variables:

- LLM_ENGINE configures the language model (possible values are INFERENCE_API, INFERENCE_ENDPOINT, OPENAI)
- RENDERING_ENGINE configures the image generation engine (possible values are INFERENCE_API, INFERENCE_ENDPOINT, REPLICATE, VIDEOCHAIN)

Since we're focusing on getting the AI Comic Factory to work with the Inference API, both should be set to INFERENCE_API.

You can find more information about alternative engines and vendors in the project's README and its .env configuration file.
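A typo in either variable is an easy way to end up with a broken Space, so a quick check before deploying can help. This is a hypothetical helper (engine_problems is an illustrative name, not part of the project); the value sets are copied from the engine options described in this section and may grow as the project adds engines, so treat the README as the source of truth.

```python
from typing import Dict, List, Mapping, Set

# Allowed values per variable, as described for LLM_ENGINE and RENDERING_ENGINE.
ALLOWED: Dict[str, Set[str]] = {
    "LLM_ENGINE": {"INFERENCE_API", "INFERENCE_ENDPOINT", "OPENAI"},
    "RENDERING_ENGINE": {"INFERENCE_API", "INFERENCE_ENDPOINT", "REPLICATE", "VIDEOCHAIN"},
}


def engine_problems(env: Mapping[str, str]) -> List[str]:
    """Return a list of human-readable problems; an empty list means the config looks valid."""
    problems: List[str] = []
    for name, allowed in ALLOWED.items():
        value = env.get(name)
        if value is None:
            problems.append(f"{name} is not set")
        elif value not in allowed:
            problems.append(f"{name}={value!r} is not one of {sorted(allowed)}")
    return problems


# The configuration used in this guide: both engines on the Inference API.
print(engine_problems({"LLM_ENGINE": "INFERENCE_API", "RENDERING_ENGINE": "INFERENCE_API"}))  # → []
```

Passing os.environ instead of a literal dict checks the variables of the current process.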

Model configuration

The AI Comic Factory comes pre-configured with the following models:

- LLM_HF_INFERENCE_API_MODEL: default value is meta-llama/Llama-2-70b-chat-hf
- RENDERING_HF_RENDERING_INFERENCE_API_MODEL: default value is stabilityai/stable-diffusion-xl-base-1.0

Your PRO Hugging Face account already gives you access to these models, so there's nothing to change.
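To see what "using the Inference API" means for these two models, here is a sketch that builds, but does not send, an authenticated request for a model ID resolved from the variables above. The endpoint https://api-inference.huggingface.co/models/<model id> and the {"inputs": ...} payload shape are assumptions based on the serverless Inference API at the time of writing; build_request and model_for are hypothetical helpers, not the app's code.

```python
import json
import urllib.request
from typing import Mapping

# Defaults listed above; each can be overridden by the same-named variable.
DEFAULT_MODELS = {
    "LLM_HF_INFERENCE_API_MODEL": "meta-llama/Llama-2-70b-chat-hf",
    "RENDERING_HF_RENDERING_INFERENCE_API_MODEL": "stabilityai/stable-diffusion-xl-base-1.0",
}

# Assumption: base URL of the serverless Inference API at the time of writing.
API_BASE = "https://api-inference.huggingface.co/models/"


def model_for(var: str, env: Mapping[str, str]) -> str:
    """Use the variable's value if set, otherwise fall back to the default model."""
    return env.get(var, DEFAULT_MODELS[var])


def build_request(model_id: str, prompt: str, token: str) -> urllib.request.Request:
    """Prepare (but do not send) a POST request for one model."""
    body = json.dumps({"inputs": prompt}).encode("utf-8")
    return urllib.request.Request(
        API_BASE + model_id,
        data=body,
        headers={"Authorization": f"Bearer {token}", "Content-Type": "application/json"},
        method="POST",
    )


req = build_request(model_for("LLM_HF_INFERENCE_API_MODEL", {}), "Write a comic about a robot.", "hf_xxx")
print(req.full_url)
```

Sending it with urllib.request.urlopen(req) would require a valid token and network access; the same function works for the SDXL model ID, whose response is image bytes rather than text.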

Going further

Support for the Inference API in the AI Comic Factory is in its early stages, and some features, such as SDXL's refiner step and upscaling, have not been ported yet.

Nevertheless, we hope this information helps you start forking and tweaking the AI Comic Factory to suit your requirements.

Feel free to experiment and try other models from the community, and happy hacking!