If you’re at all aware of the zeitgeist, you’ve probably heard the term “AI slop.” It expresses a general disdain for just about anything produced by “artificial intelligence,” as if the mere involvement of AI makes a product questionable or even dangerous.

It’s easy to say that AI slop is everywhere. “AI slop is clogging our brains,” NPR warns.

The problem with this complaint is that very few people try to define “AI slop.” Is it anything made by AI? Or only such content when it appears on social media? AI images created for commercial advertising? Is it content created to deceive? Or only content created to deceive politically?

Election disinformation has existed throughout our history, but generative AI increases the risk.

— Brennan Center for Justice

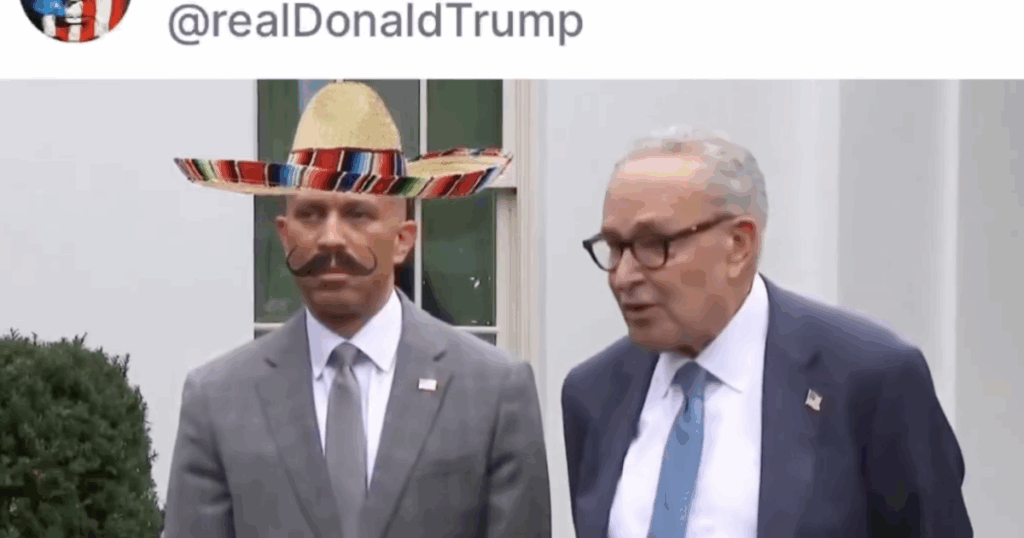

Clearly, what we need is a taxonomy of AI slop: which varieties are just a nuisance, which are actually funny, and which are a threat to social or political order. They range from jokingly obvious hoaxes to the use of AI to put words in politicians’ mouths in order to sway voters through deception.

So it’s time to really evaluate AI slop: which kinds are deceptive, which are funny, and which are just avoidable nuisances.

It’s certainly true, as my colleague Nilesh Christopher has reported, that tools like OpenAI’s Sora video app have reduced the cost of producing unauthorized deepfakes of celebrities, dead people, and copyrighted characters to near zero. The rapid uptake of Sora by users is “eroding confidence in our ability to tell the difference between real and synthetic products,” one expert told Christopher.

But that is a high-end development, and one that raises genuine concern. Much of the fretting about AI slop focuses instead on material that seems ubiquitous but innocuous.

For example, critic and blogger Ted Gioia says of AI-generated art: “Slop art is flat, clumsy, corny, listless, and often ridiculous. Slop works are celebrated for their silliness and clumsiness, which is often amplified by their bizarre juxtaposition of cultural memes.”

Gioia’s purpose was to analyze the “new aesthetics of slop,” but what strikes me about his critique is how familiar it sounds. If you replace “slop art” with “pop art” or “op art,” you might feel like you’re reading a screed by a mainstream critic of the 1950s or ’60s, such as Clement Greenberg.

This is not to say that the creators of AI-generated images are artists with the dedication and talent of earlier figures. It’s just that every era gives us creative environments that many people find boring, stupid, clumsy, and so on.

It is not uncommon for new forms of expression to emerge as technology advances. “Every media revolution produces trash and art,” writes Scientific American’s Deni Ellis Béchard. “Spam, fluff, clickbait, churnalism, kitsch, vulgarity. These are all ways to describe mass-produced, low-quality content.”

Béchard takes a very long historical view of this phenomenon. He argues that Gutenberg’s invention of movable type, or “ChatGPT in the 1450s,” ushered in “the mass production of cheap printed matter.” The early 1700s brought us “Grub Street,” cheap printed material that included “satire, political pamphlets, sensational articles, and hack journalism.” …While some of this material was certainly tongue-in-cheek, much of it entertained and educated the public.

The tidal wave of AI certainly reminds us that, as the book of Ecclesiastes says, “there is nothing new under the sun.”

Some of the hand-wringing seems like overkill. Coca-Cola is facing a backlash over its new AI-generated commercial, which depicts woodland creatures gathering to celebrate a convoy of trucks delivering Coke holiday supplies around the world.

Tech website CNET claims that the ubiquity of this ad is “a symptom of a larger problem,” but it’s not entirely clear what it thinks the problem is. The site’s complaints include that the AI-generated images have flaws a human animator would have caught, and that Coke has failed to address “customer aversion to AI.”

However, I haven’t seen much evidence of a general public “aversion” to AI in commercials. In any case, Coke includes a caption at the beginning of the commercial acknowledging that it was created with AI.

“Human creativity remains at the core of Coca-Cola advertising, and we are committed to using AI as a human enabler where it makes sense,” Coca-Cola’s head of AI, Pratik Thakar, said in an internal web post in response to questions about its use of AI.

It is true that relying on AI can reduce the cost and time required to produce certain content. That means lost jobs for creative professionals, an issue that concerns Hollywood actors, writers, and artists, as well as journalists, in whose field factual errors created by AI are a serious problem.

AI production is becoming increasingly realistic as it permeates the commercial space. But if the concern is that viewers will be fooled into thinking that what they see on screen is real, that seems unlikely. It’s doubtful that viewers would think an image of an ostrich driving a delivery truck was real, any more than they thought a polar bear hugging a Coke bottle was real in the 1990s, when Coke ads made use of computer-generated imagery.

This does not mean that AI slop is always harmless. What we should be most concerned about is propaganda, or more precisely, political propaganda. In the run-up to the 2024 election, AI-generated partisan deepfakes proliferated on the web.

One was a video purporting to show President Biden disparaging transgender women. “You’ll never be a real woman,” the deepfaked Biden says. “You have no uterus, no ovaries, no eggs. You are a homosexual who has been distorted by drugs and surgery into a crude mockery of natural perfection.” The video ends with the fake Biden implying that suicide may be an inevitable option for the women.

The video was so crude that it was quickly exposed as a fake. Even conservatives sounded the alarm. PJ Media’s Matt Margolis wrote, “The audio fits Joe Biden’s voice and cadence perfectly (apart from the usual stumbles in his speeches), but the mouth movements are far from convincing.” He continued: “Nevertheless, what’s really frightening here is the stunning realism of the deepfake Biden’s voice. Without the accompanying video, it would be easy to conclude that the speech was legitimately spoken by Joe Biden, raising great concerns about how this technology could be used for nefarious purposes.”

Another deepfake was posted by Florida Governor Ron DeSantis’ presidential campaign. It was an attempt to link Donald Trump, whom Mr. DeSantis was challenging for the Republican nomination, with Dr. Anthony Fauci, the government epidemiologist whom Mr. DeSantis had sought to demonize as the force behind mandatory coronavirus vaccination. The deepfake showed Trump hugging Fauci.

The DeSantis campaign, which would soon collapse, defended the video by saying it was just a social media post and not an official campaign ad. Twitter, where the video was first posted, eventually added an AI label indicating it was a fake.

Deliberate deception is often the path to victory in the heat of an election campaign, and there’s a good chance that AI-generated fakes will find their mark. The Brennan Center for Justice at New York University School of Law warned last year that “election disinformation has existed throughout history, but generative AI increases the risk.” It added: “This changes the scale and sophistication of digital fraud and heralds a new language of technical concepts related to detection and authentication that voters must now grapple with.”

When the latest cycle of AI technology was in its early stages, deepfakes were relatively easy to identify. Widely published guides to spotting fakes relied on the technology’s inability to render certain things correctly, which produced images with flaws such as six-fingered hands, unnatural-looking hair and feet, and shadows falling in the wrong places.

As the technology advances, these artifacts will disappear, making it more likely that would-be detectives trying to uncover AI-generated fakes will be misled, the Brennan Center noted. Last year, the center published advice on how to spot fakes in the modern AI era. It recommends seeking out “authoritative context from trusted, independent fact-checkers” and approaching emotionally charged content “with critical scrutiny, as such content can impair judgment and leave people vulnerable to manipulation.”

Of course, purveyors of political deepfakes aim to defeat exactly that kind of scrutiny, so advising people not to be fooled doesn’t seem like an effective safeguard, especially when voters are inundated with campaign waste ahead of an election.

Over time, AI slop may prove to be its own worst enemy. AI-generated entertainment content has failed to attract audiences beyond the most common content consumers. Even when it does, the AI content is essentially an imitation of human creation. That happened last month, when an AI-generated country song topped Billboard’s country digital sales chart. The song’s vocal style and other creative elements, however, were taken from a real artist, Blanco Brown, who had no idea about its creation.

This suggests that the cause of AI slop’s demise may be boredom rather than consumer disgust. The goal of entertainment content is for viewers to connect with human creativity, something that AI cannot replicate except superficially.

If this technology becomes so cheap that anyone can use it, it could lead to a wave of even lower-quality slop. The challenge will be to sort the harmless junk from the truly dangerous slop.