In a move that could reshape the regulatory landscape for artificial intelligence, Anthropic, the San Francisco-based AI startup behind the Claude chatbot, has thrown its weight behind California’s SB 53, a bill aimed at imposing transparency and safety measures on powerful AI systems. The endorsement, announced Monday, comes as the company faces growing calls for oversight amid rapid advances in AI technology. According to a report by TechCrunch, Anthropic described the bill as providing a “strong foundation for managing powerful AI systems” through transparency rather than prescriptive technical mandates.

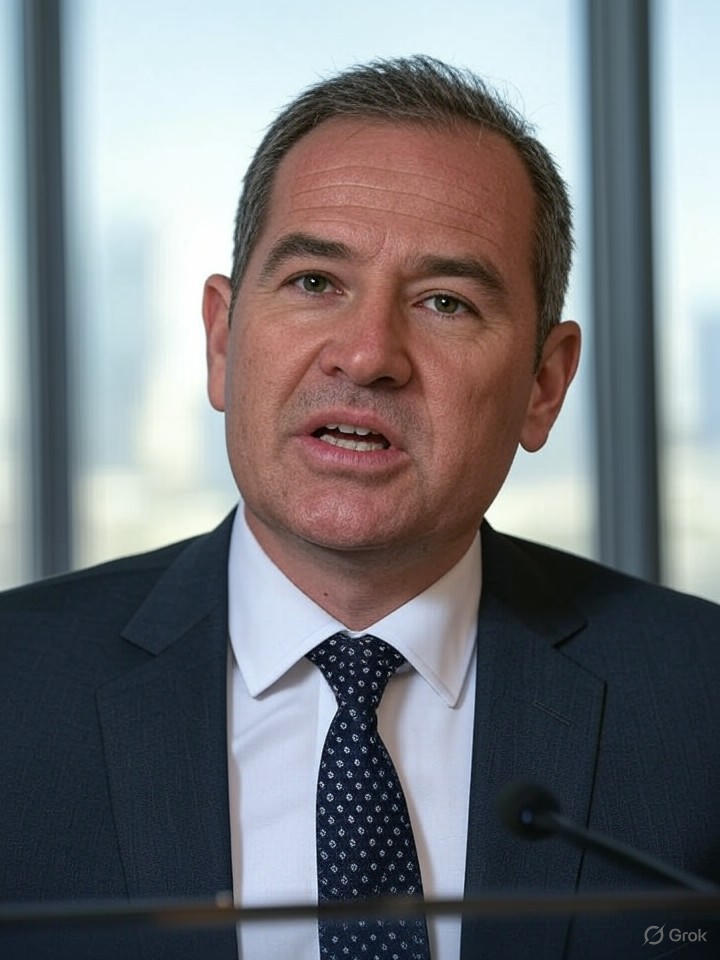

The bill, authored by state Sen. Scott Wiener, would require large AI companies to detail how they manage catastrophic risks, publish transparency reports before deploying new models, develop and publish safety frameworks, and report critical safety incidents to the state. The approach is a scaled-back version of an earlier effort: the more ambitious SB 1047, which had sought stricter regulations on AI development, was vetoed by Governor Gavin Newsom last year.

Changes in industry dynamics

Anthropic’s support stands in stark contrast to widespread opposition from much of Silicon Valley, where figures like venture capitalist Marc Andreessen have criticized similar measures as overreach that could curb innovation. Recent posts on X, formerly Twitter, highlighted the divide, with some users praising the endorsement as a practical step toward responsible AI governance. As detailed in a Politico article, support from a major AI lab like Anthropic, valued at $183 billion after its recent $13 billion funding round, could influence Newsom’s decision on whether to sign SB 53 into law.

This is not Anthropic’s first foray into policy advocacy. The company has previously emphasized safety research and, as noted in an earlier TechCrunch report, has unveiled a custom AI model for US national security customers. By backing SB 53, Anthropic positions itself as a proponent of measured regulation and could set a precedent for other frontier AI companies such as OpenAI and Google.

Broader implications for AI regulations

Critics argue that, while less stringent than its predecessor, SB 53 still imposes burdensome reporting requirements that could slow AI progress in a highly competitive global market. An article on StartupNews.fyi points out that federal officials have also opposed state-level AI safety efforts, fearing fragmented regulation across the United States. Advocates, including safety proponents, counter that the bill could serve as a blueprint for national standards, and an online analysis from American Baza highlights its potential to hold the technology industry accountable.

The timing of Anthropic’s support is notable, arriving amid a flurry of AI-related legislation in California. Newsom recently weighed 38 bills, vetoing some and signing others into law, as outlined in a TechCrunch roundup from last year. For industry insiders, the development underscores a maturing debate over how to balance innovation with protection against risks such as AI-enabled misinformation and autonomous weapons.

Looking ahead to implementation challenges

If signed, SB 53 would require companies like Anthropic to publicly disclose their risk management strategies, a push for transparency that aligns with the company’s own emphasis on ethical AI development. Enforcement remains a key question, however, and the law could face legal challenges from opponents who consider it regulatory overreach. Insights from Transformers News suggest that, despite intense lobbying, the bill is entering its final stages, positioning California as a leader in AI governance.

Ultimately, Anthropic’s move could encourage other players to engage constructively with policymakers and chart a more collaborative path forward. As AI capabilities expand, such support may prove crucial in establishing norms that prioritize safety without hindering technical breakthroughs.