Current AI benchmarks are struggling to keep pace with the latest models. As useful as they are for measuring a model's performance on a particular task, it can be hard to tell whether a model trained on internet data is actually solving a problem or simply remembering answers it has already seen. As a model approaches 100% on a given benchmark, that benchmark also becomes less effective at revealing meaningful performance differences. We continue to invest in new, more challenging benchmarks, but the path to general intelligence requires us to keep looking for new ways to evaluate. The recent shift toward dynamic, human-judged testing addresses these problems of memorization and saturation, but in turn it introduces new difficulties stemming from the inherent subjectivity of human preferences.

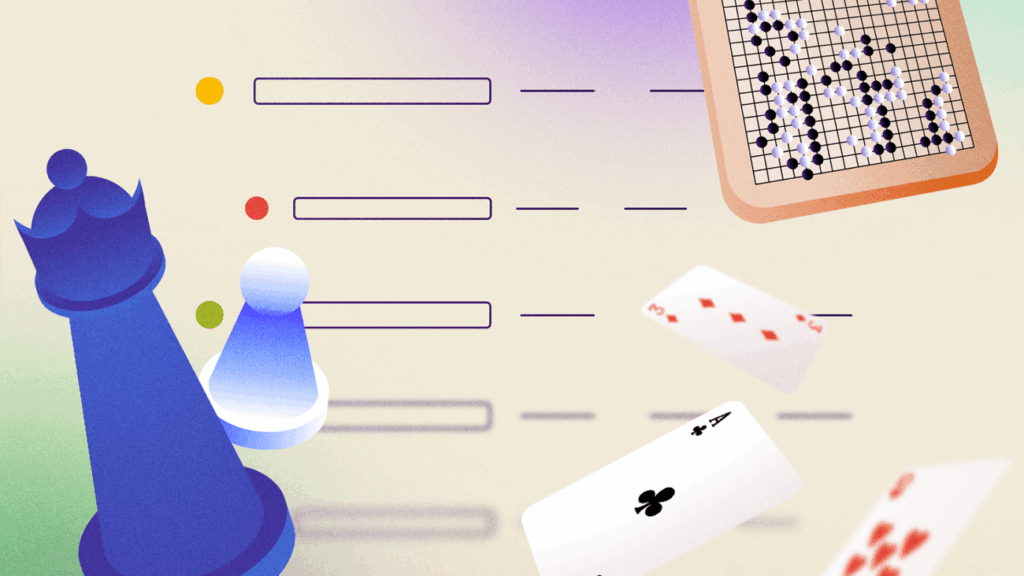

While we continue to evolve and pursue current AI benchmarks, we are also consistently looking to test new approaches to evaluating models. That's why today we're introducing Kaggle Game Arena, a new public AI benchmarking platform where AI models compete head-to-head in strategic games, offering a verifiable and dynamic way to evaluate them.