We have heard this message loud and clear over the past few weeks. The Trump administration and its allies in Congress want to prevent states from regulating artificial intelligence, arguing there needs to be “one rule” nationwide.

The question is, can they actually do it?

However fervently they insist that their latest effort be taken seriously, the answer is certainly not through an executive order alone. But even on the legislative front, there is little evidence of consensus at the federal level about what the rules governing AI systems should be, and the proposals currently under consideration amount to many different, sometimes overlapping rules rather than a single standard. These are complex issues that require a multifaceted policy approach, as we have seen this year in California, where the Legislature passed more than a dozen new laws in this area.

The reality is that for the past decade states have been (and continue to be) at the forefront of technology policy issues related to privacy, online safety, and AI surveillance. The Trump administration and a handful of prominent Republicans are trying to block state legislation on these important issues, including AI. They failed in June and failed again in December. We will continue to fight this battle and hope they continue to lose.

What we are seeing in Washington this week is an escalation of a fight that has been brewing for years. Ever since Californians adopted a landmark privacy law through a ballot initiative in 2020, Big Tech companies have complained about the patchwork of state laws. Preemption has also been a major focus of the debate over federal privacy law in recent years. But those negotiations were bipartisan, and the proposals aimed to establish federal standards for privacy while preserving states’ authority outside of certain limits.

Over the past five years, states have become increasingly active in addressing technology policy issues in the absence of federal leadership. This is an advantage of a federalist system. States serve as laboratories of democracy and advance policy despite federal government inaction.

Over the past two years, as the deployment of general-purpose AI models and chatbots has accelerated, states have begun to focus on the risks and the need for transparency, oversight, and accountability to address the harm these systems can cause. This includes laws passed this year and last in California, Colorado, Utah, and several other states. States are identifying new issues and future risks and working to address them through new policies. This indicates that the system is functioning properly.

The proposals we’ve seen this year are different. Both the White House and Congressional leaders in the House of Representatives are pushing for drastic preemptive measures that would completely block states from enacting or enforcing technology regulations. An unprecedented reversal of our federalist structure is needed, they argue, to “remove barriers” to AI “leadership.” But there is no evidence that AI companies are unable to comply with existing laws or (thanks to the hundreds of millions of dollars they poured into PACs this year) unprepared to make their voices heard in national policy debates.

Sen. Ted Cruz’s (R-Texas) proposal in this summer’s budget bill to ban state AI laws for 10 years was the first sign of how preemption strategies have changed. The provision, which would have stripped funding from states that enforce AI regulations, was rejected by 99 of his fellow senators after an amendment by Republican Sen. Marsha Blackburn of Tennessee. Although AI companies lost that battle, they were clearly gearing up for a larger war against the states.

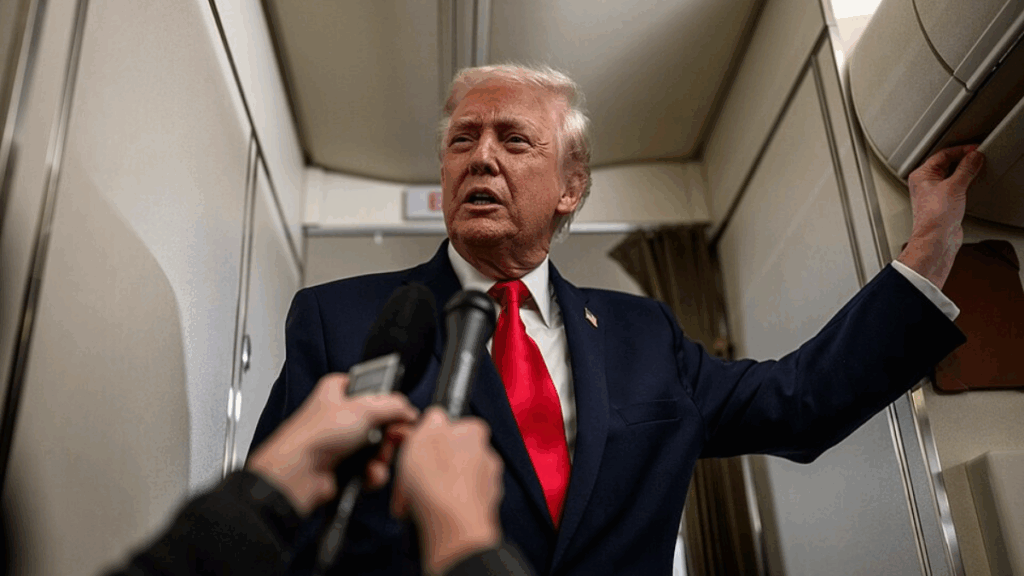

Mr. Cruz returned to the issue in September, insisting that his idea of a moratorium was “not dead at all.” The big news last month was that House Majority Leader Steve Scalise (R-Louisiana) and President Donald Trump himself endorsed that approach. Scalise said he intends to include a version of the moratorium in the annual National Defense Authorization Act (NDAA), which Congress is expected to pass by the end of the year. However, it quickly became clear that the effort lacked support from both the bipartisan leaders who drafted the NDAA and the broader Senate. Prominent Republican governors also opposed the idea.

With no clear path in sight for Congress to pass a ban on state AI policymaking, a new draft executive order titled “Eliminating State Law Interference with National AI Policy” has emerged. To be clear, the president does not have the power to “repeal” state laws or prohibit states from regulating AI.

So what should we think about this executive order?

Of note is the White House’s tacit admission that the moratorium bill remains doomed. In other words, this is the administration’s attempt to do something through executive action since Congress cannot pass its own AI moratorium proposal. But what actions could the president actually take, or direct federal agencies to take, that could impede state policymaking? There aren’t many.

The executive order signed Thursday directs the Commerce Department to create a “list” of state AI laws that are “inconsistent” with policy goals and then issue “a policy notice specifying the conditions under which each state will be eligible for residual status [of federal broadband funding].” Under this policy, states on the Commerce Department’s list would be “ineligible to receive non-deployment funds to the fullest extent permitted by federal law.”

Does federal law permit the Department of Commerce to unilaterally impose such limits on broadband funding despite Congressional appropriations? Although not specified in the Executive Order, we can assume that this is not the case since the primary purpose of the Cruz bill was to impose such limits through legislation.

The order then asks the Federal Communications Commission to “begin the process of determining whether to adopt federal reporting and disclosure standards for AI models that preempt conflicting state laws.” However, because the FCC’s regulatory authority is limited to certain categories of communications service providers, it does not have the legal authority to impose such standards on AI companies.

The order also directs the Federal Trade Commission to issue a “policy statement” on preemption related to the agency’s unfair and deceptive trade practices authority, focusing on “state laws that require changes to the truthful output of AI models.” No such state law exists, and even if one did, it would not be preempted under the FTC’s statutory authority.

Finally, the order directs the president’s advisors to develop “legislative recommendations to establish a uniform federal policy framework on AI” that would serve as a vehicle to preempt conflicting state AI laws. But that’s easier said than done.

As policymakers and many stakeholders in industry and civil society have attested, establishing uniform standards for AI (or any technology) is far from simple. Indeed, the executive order implicitly acknowledges the complexity of this project by including an incomplete list of preemption carve-outs, ending with the ominous “(iv) Other topics to be determined.”

Mr. Trump’s and Mr. Cruz’s efforts to suspend state AI laws without even outlining their proposed alternatives invert the most fundamental principle of regulating complex systems: start small, iterate, learn, and keep checking what works and what doesn’t.

Despite the lack of momentum for AI standards in Congress, efforts to preempt state laws will remain a focus in the House, as the Energy and Commerce Committee moves to advance several preemptive bills.

The committee held a markup this week to consider 18 different “online safety” bills. Notably, this package includes amended versions of several bills that received bipartisan support in the Senate, including the Kids Online Safety Act (KOSA) and the Children and Teens’ Online Privacy Protection Act (COPPA 2.0). Both of these revised bills are significantly weaker than the Senate versions, with sweeping preemption provisions that would wreak havoc on existing state privacy and online safety laws. Even if House Republican leaders are able to pass these bills, it is unclear whether they will move forward in the Senate.

But what is the potential impact of the executive order and the House bills on existing and future state policy activity next year? The order does not (and cannot) directly preempt any state law. However, the order also calls on the attorney general to create an “AI Litigation Task Force… whose sole responsibility is to challenge state AI laws.” What does that actually mean?

The three legal theories mentioned in the executive order’s task force provision are the Interstate Commerce Clause, preemption by existing statutes, and “other unlawful acts.” However, these are all classic examples of legal defenses raised by a defendant accused of violating state law (or of claims that a defendant might use as the basis for an affirmative injunction against future enforcement).

The United States (via the Department of Justice) has no standing or cause of action to challenge state laws as currently enacted. Therefore, the Department of Justice will most likely join or intervene in future lawsuits raising these claims, as it did in a lawsuit challenging California’s emissions standards earlier this year. In that role, the Department of Justice may support existing claims by companies that challenge certain state laws or enforcement actions, but it has no special legal authority or power to influence a court’s analysis of those issues. The fact that the Trump administration and its Republican allies in Congress are pushing for new legal limits on state AI laws indicates that they recognize that current federal law does not meaningfully constrain them.

The language in the House versions of several recently announced online safety bills is significantly different and could pose major hurdles to the passage and enforcement of a wide range of state privacy, consumer protection, competition, and other laws, including those specifically focused on AI. The current House proposal would override and block a wide range of state authorities because it includes a preemption clause that prohibits all states from enacting or enforcing any law, regulation, etc. “relating to the provisions” of the bill (including KOSA and COPPA 2.0, as amended).

Because nearly all regulation of technology companies is “related” to data and design practices, this type of preemption would have a truly fundamental and unpredictable impact on states’ ability to regulate technology companies and services. All state laws enacted to protect privacy or protect children online would be displaced by whatever weaker federal standards Congress includes in the final bill. That means courts could strike down state privacy provisions if Congress passes the revised COPPA 2.0, and courts could strike down state online safety provisions if Congress passes the revised KOSA. Because courts rely on Congress’s intent when interpreting the scope of a preemption clause, the fact that lawmakers have made clear their goal of creating one federal rulebook makes it likely that courts will strike down a wide range of state laws.

All individuals and organizations dedicated to protecting people from abusive and harmful conduct online should speak out against efforts by the White House and members of Congress to undermine important state protections and rights. Americans deserve well-considered policy decisions from their leaders. This is the opposite.