The Hugging Face datasets library not only provides access to more than 70k publicly available datasets, but also offers very convenient data preparation pipelines for custom datasets.

Renumics Spotlight allows you to create interactive visualizations to identify critical clusters in your data. Because Spotlight understands the data semantics within Hugging Face datasets, you can get started with just one line of code:

    import datasets
    from renumics import spotlight

    ds = datasets.load_dataset("speech_commands", "v0.01", split="validation")

    spotlight.show(ds)

Spotlight allows you to leverage model results such as predictions and embeddings to gain a deeper understanding of data segments and model failure modes:

    ds_results = datasets.load_dataset(
        "renumics/speech_commands-ast-finetuned-results", "v0.01", split="validation"
    )
    # Append the result columns to the original dataset (rows are matched by position).
    ds = datasets.concatenate_datasets([ds, ds_results], axis=1)

    spotlight.show(
        ds,
        dtype={"embedding": spotlight.Embedding},
        layout=spotlight.layouts.debug_classification(
            embedding="embedding", inspect={"audio": spotlight.dtypes.audio_dtype}
        ),
    )

Data inspection is a very important task in almost all ML development stages, but it can also be very time consuming.

“Manual inspection of data has probably the highest value-to-prestige ratio of any activity in machine learning.” – Greg Brockman

Spotlight helps you make data inspection more scalable along two dimensions: setting up and maintaining custom data inspection workflows, and finding relevant data samples and clusters to inspect. The following sections show some examples based on Hugging Face datasets.

Spotlight 🤝 Hugging Face datasets

The datasets library has several features that make it an ideal tool for working with ML datasets. It stores tabular data (e.g. metadata, labels) along with unstructured data (e.g. images, audio) in a common Arrow table. It also describes important data semantics through features (e.g. images, audio) and additional task-specific metadata.

Spotlight works directly on top of the datasets library. This means that there is no need to copy or preprocess the dataset for data visualization and inspection. Spotlight loads the tabular data into memory to enable efficient client-side data analysis. Memory-intensive unstructured data samples (e.g. audio, images, video) are loaded lazily on demand. In most cases, data types and label mappings are inferred directly from the dataset. Here, we visualize the CIFAR-100 dataset with one line of code:

    ds = datasets.load_dataset("cifar100", split="test")
    spotlight.show(ds)

In cases where the data types are ambiguous or not specified, the Spotlight API allows you to assign them manually:

    # Map each class name to its integer index.
    label_mapping = dict(
        zip(ds.features["fine_label"].names, range(len(ds.features["fine_label"].names)))
    )

    spotlight.show(
        ds,
        dtype={
            "img": spotlight.Image,
            "fine_label": spotlight.dtypes.CategoryDType(categories=label_mapping),
        },
    )
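To make the mapping concrete, here is a minimal sketch that builds the same kind of name-to-index dictionary from a plain list. The `names` list is a made-up stand-in for `ds.features["fine_label"].names`, not the real CIFAR-100 label list.

```python
# Made-up class names standing in for ds.features["fine_label"].names.
names = ["cat", "dog", "bird"]

# Map each class name to its integer index.
label_mapping = dict(zip(names, range(len(names))))

# The inverse mapping turns stored integer labels back into readable names.
index_to_name = {index: name for name, index in label_mapping.items()}

print(label_mapping)
print(index_to_name[1])
```

The dictionary keys are what a viewer displays, while the integer values are what is stored in the label column.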

Use model results for data inspection

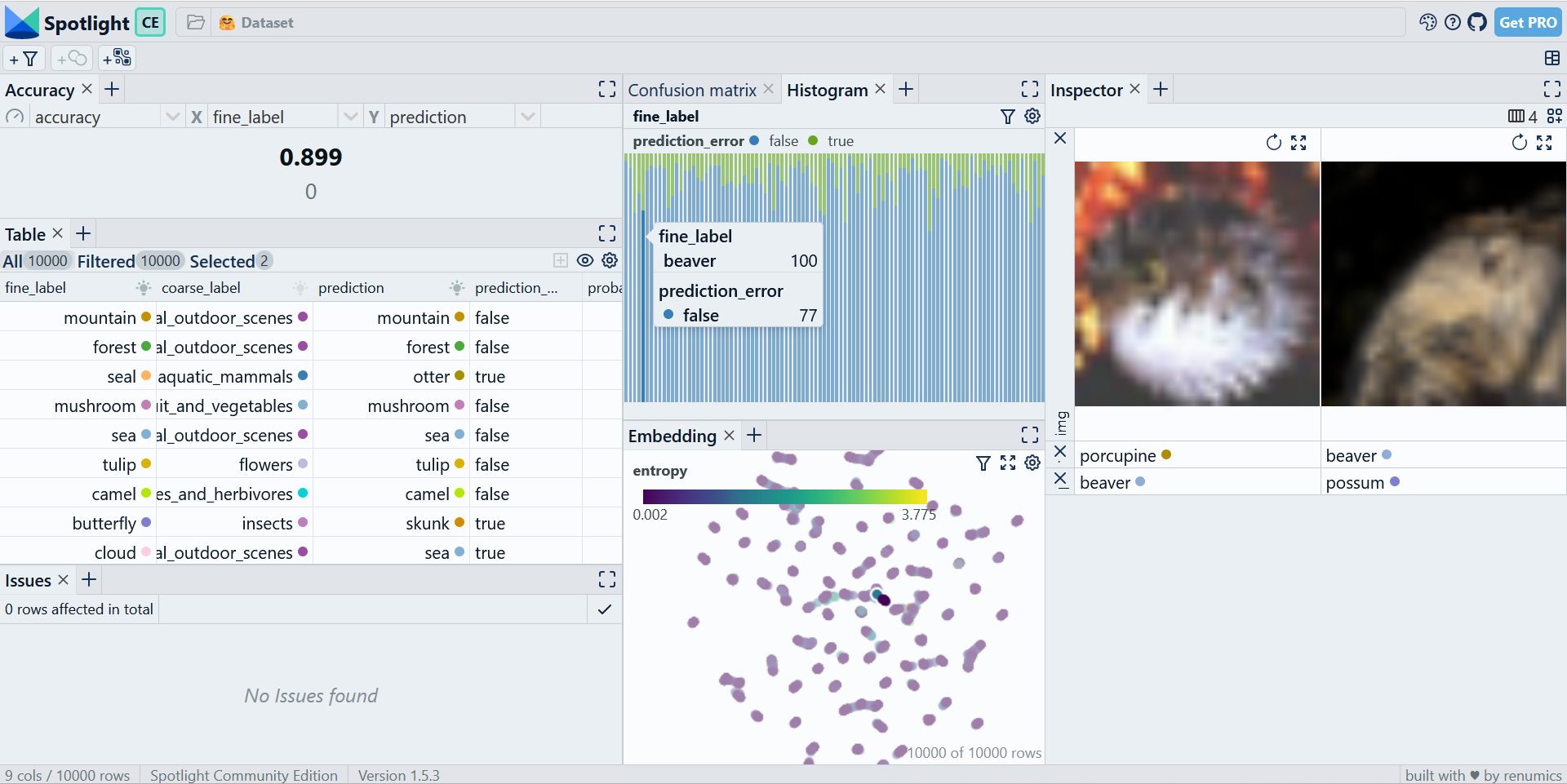

Exploring raw, unstructured datasets often provides little insight. Using model results such as predictions and embeddings can help you discover important data samples and clusters. Spotlight has several visualization options (similarity maps, confusion matrix, etc.) that specifically use model results.
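As a rough illustration of why predictions help, the sketch below counts (label, prediction) pairs, which is the information a confusion matrix displays, and collects the misclassified row indices. The `labels` and `predictions` lists are made-up values, not results from any model in this post.

```python
from collections import Counter

# Made-up ground-truth labels and model predictions for six samples.
labels = [0, 0, 1, 1, 2, 2]
predictions = [0, 1, 1, 1, 2, 0]

# Count how often each (label, prediction) pair occurs.
confusion = Counter(zip(labels, predictions))

# Rows where the prediction disagrees with the label are worth inspecting first.
misclassified = [i for i, (y, p) in enumerate(zip(labels, predictions)) if y != p]

print(confusion)
print(misclassified)
```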

We recommend storing your prediction results directly in a Hugging Face dataset. This not only allows you to take advantage of the batch processing capabilities of the datasets library, but also preserves label mappings.

You can use the transformers library to compute embeddings and predictions for the CIFAR-100 image classification problem. Install the libraries via pip:

    pip install renumics-spotlight datasets transformers[torch]

Now you can compute the enrichment:

    import torch
    import transformers

    device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

    model_name = "Ahmed9275/Vit-Cifar100"
    processor = transformers.ViTImageProcessor.from_pretrained(model_name)
    cls_model = transformers.ViTForImageClassification.from_pretrained(model_name).to(device)
    fe_model = transformers.ViTModel.from_pretrained(model_name).to(device)


    def infer(batch):
        # Convert to RGB so the processor always receives 3-channel images.
        images = [image.convert("RGB") for image in batch]
        inputs = processor(images=images, return_tensors="pt").to(device)

        with torch.no_grad():
            outputs = cls_model(**inputs)
            probs = torch.nn.functional.softmax(outputs.logits, dim=-1).cpu().numpy()
            # Use the hidden state of the first token as the image embedding.
            embeddings = fe_model(**inputs).last_hidden_state[:, 0].cpu().numpy()

        preds = probs.argmax(axis=-1)
        return {"prediction": preds, "embedding": embeddings}


    # Reuse the label feature for predictions so the label mapping is preserved.
    features = datasets.Features(
        {
            **ds.features,
            "prediction": ds.features["fine_label"],
            "embedding": datasets.Sequence(feature=datasets.Value("float32"), length=768),
        }
    )
    ds_enriched = ds.map(
        infer, input_columns="img", batched=True, batch_size=2, features=features
    )
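The post-processing inside the inference function can be looked at in isolation. The following sketch reproduces it with NumPy on random arrays instead of real model outputs. The batch size of 2 and the 197 tokens per image are assumptions chosen for illustration; the 100 classes and the hidden size of 768 follow the CIFAR-100 setup above.

```python
import numpy as np

rng = np.random.default_rng(0)

# Random stand-ins for model outputs on a batch of 2 images:
# logits over 100 classes, and hidden states of shape (batch, tokens, hidden).
logits = rng.normal(size=(2, 100))
last_hidden_state = rng.normal(size=(2, 197, 768))

# Numerically stable softmax over the class dimension.
shifted = logits - logits.max(axis=-1, keepdims=True)
probs = np.exp(shifted) / np.exp(shifted).sum(axis=-1, keepdims=True)

# The predicted class is the index of the highest probability.
preds = probs.argmax(axis=-1)

# Keep only the first token of each sequence as the image embedding.
embeddings = last_hidden_state[:, 0]

print(preds.shape, embeddings.shape)
```

Each embedding is a single 768-dimensional vector per image, which is why the embedding column is declared as a fixed-length float32 sequence.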

If you don't want to perform the full inference run, you can alternatively download precomputed model results for CIFAR-100 to follow this tutorial:

    ds_results = datasets.load_dataset("renumics/spotlight-cifar100-enrichment", split="test")
    ds_enriched = datasets.concatenate_datasets([ds, ds_results], axis=1)

You can now use the results to interactively explore relevant data samples and clusters in Spotlight:

    layout = spotlight.layouts.debug_classification(
        label="fine_label",
        embedding="embedding",
        inspect={"img": spotlight.dtypes.image_dtype},
    )
    spotlight.show(ds_enriched, dtype={"embedding": spotlight.Embedding}, layout=layout)

Customizing the Data Inspection Workflow

Visualization layouts can be interactively modified, saved and loaded via the GUI. There are a variety of widget types and configurations to choose from. The Inspector widget can represent multimodal data samples containing text, images, audio, video, and time series data.

You can also define the layout via the Python API. This option is particularly useful for building custom data inspection and curation workflows, including EDA, model debugging, and model monitoring tasks.

In combination with the Data Issues widget, the Python API provides a great way to integrate existing script results (such as data quality checks and model monitoring) into a scalable data inspection workflow.
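A script result that feeds such a workflow usually boils down to a short description plus the affected row indices. The following sketch shows one way an existing quality check could produce that. The `Issue` dataclass, the `check_duration` helper, and the duration values are all hypothetical and are not part of the Spotlight API; how the findings are handed to the Data Issues widget is not shown here.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    """Hypothetical container for one finding; not a Spotlight class."""

    title: str
    rows: list


def check_duration(durations, min_seconds=0.5):
    # Flag rows whose audio clip is missing or shorter than the threshold.
    rows = [i for i, d in enumerate(durations) if d is None or d < min_seconds]
    return Issue(title=f"Clip missing or shorter than {min_seconds}s", rows=rows)


# Made-up clip durations in seconds.
durations = [1.0, 0.2, None, 0.9, 0.4]
issue = check_duration(durations)
print(issue.title, issue.rows)
```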

Using Spotlight on the Hugging Face Hub

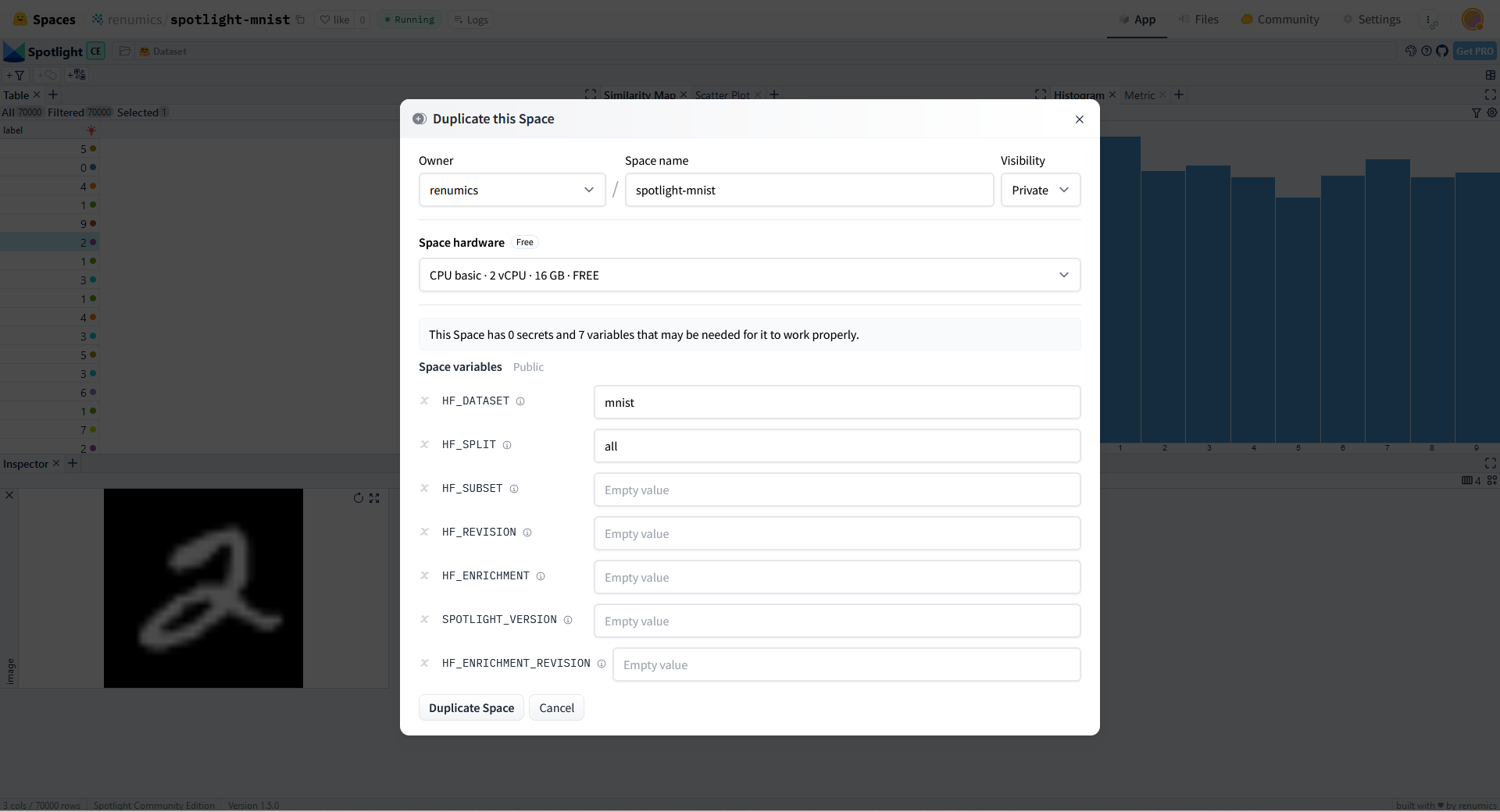

You can use Spotlight directly on your local NLP, audio, CV, or multimodal dataset. If you would like to showcase your dataset or model results on the Hugging Face Hub, you can use Hugging Face Spaces to launch a Spotlight visualization for it.

We have already prepared example Spaces for many popular NLP, audio, and CV datasets on the Hub. Simply duplicate one of these Spaces and specify your dataset in the hf_dataset variable.

Optionally, you can select a dataset that contains model results, as well as other configuration options such as splits, subsets, or dataset revisions.

What’s next?

Spotlight allows you to create interactive visualizations and leverage data enrichments to identify critical clusters in your Hugging Face datasets. In this blog post, we have seen an audio ML example and a computer vision example.

You can use Spotlight directly to explore and curate NLP, audio, CV, or multimodal datasets.