A potential consistency model (LCM) is a way to reduce the number of steps required to generate a stable diffusion (or SDXL) image by distilling the original model to another version (4-8 instead of the original 25-50). Distillation is a type of training procedure that attempts to reproduce the output from a source model using a new model. Distillation models may be designed to be small (for Distilbert or recently released Distil-Whisper), or in this case they may be designed to have fewer steps to take. Usually it is a long and expensive process that requires a huge amount of data, patience and some GPUs.

Well, that was what it was before today!

We are pleased to announce a new way to make SDXL faster, essentially stable diffusion and essentially stable diffusion, as if distilled using the LCM process! How does it sound like running an SDXL model in about 1 second, not 7 on the 3090, not 10 times faster on the MAC? Read more!

content

Method Overview

So, what is the trick? For potential consistency distillation, each model must be distilled individually. The core idea of LCM LORA is to train only a few adapters known as the Lora layer, rather than a complete model. The resulting lora can be applied to fine-tuned versions of the model without distilling individually. If you’re itching to see what this actually looks like, jump to the next section and play it with your inference code. If you want to train your own Lola, this is the process you use.

Select the available teacher models from the hub. For example, you can use SDXL (base), or a finely tuned or Dream Booth version of your choice. Training LCM Lora on a model. Lora is a kind of performance-efficient tweak or PEFT, and is much cheaper than fine-tuning a complete model. For more information about PEFT, please see this blog post or the Diffusers LORA documentation. Use LORA with SDXL diffusion model and an LCM scheduler. bingo! You can get high quality inference in just a few steps.

Download the paper for more information about the process.

Why is this important?

Stable diffusion and fast inference in SDXL enable new use cases and workflows. To give some names:

Accessibility: Generation tools can be used effectively by more people even if you don’t have access to modern hardware. Faster Iteration: Get more images and multiple variations in some time! This is great for artists and researchers. Whether for personal or commercial purposes. Various accelerators, including CPUs, may allow for production workloads. Cheaper image generation service.

To measure the difference in speeds we are talking about, it takes about a minute to generate a single 1024×1024 image on an M1 MAC with SDXL (base). With the LCM Lora, you can get great results in just 6 seconds (4 steps). This is a few orders of magnitude faster and not having to wait for results is a game changer. Using the 4090 gives you an almost instantaneous response (less than 1 second). This will disable SDXL in applications where real-time events are required.

High-speed inference with SDXL LCM LORAS

The Diffusers version released today makes LCM LORAS easier to use.

from Diffuser Import Diffusionpipeline, lcmscheduler

Import Torch Model_id = “stabilityai/stable-diffusion-xl-base-1.0”

lcm_lora_id = “Potential meaningless/LCM-LORA-SDXL”

pipe = diffusionpipeline.from_pretrained(model_id, variant =“FP16”)pipe.load_lora_weights(lcm_lora_id)pipe.scheduler = lcmscheduler.from_config(pipe.scheduler.config)pipe.to(device=“cuda”,dtype = torch.float16)prompt = “Close-up photo of an elderly man standing in the rain at night, lamp, in the street illuminated by a Leica 35mm summilux.”

Images = pipe(prompt = prompt, num_inference_steps =4,Guidance_scale =1,).images(0))

Note the way you code:

Instantiate a standard diffusion pipeline using the SDXL 1.0 base model. Apply the LCM Lora. Change the scheduler to LCMSCheduler. This is what is used in potential consistency models. that’s it!

This will result in the following full resolution image:

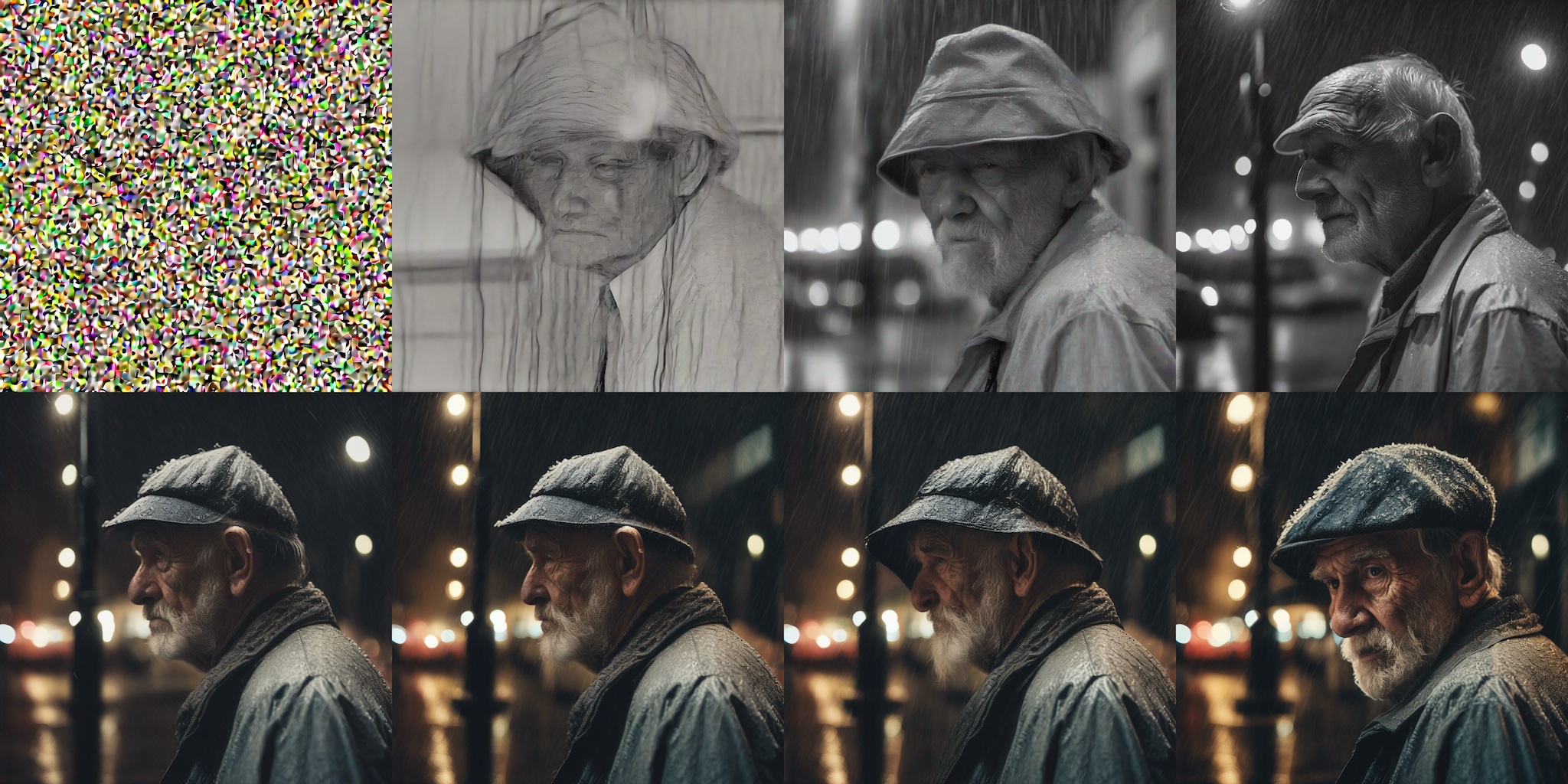

Images generated in SDXL in 4 steps using LCM LORA.

Quality comparison

Let’s see how the number of steps affects the quality of production. The following code generates the image with inference steps 1-8.

Image = ()

for Steps in range(8): generator = torch.generator(device = pipe.device).manual_seed(1337)image = pipe(prompt = prompt, num_inference_steps = step+1,Guidance_scale =1generator = generator, ).images(0) Image.Append (Image)

These are the eight images that will be displayed in the grid.

LCM Lora generation with 1-8 steps.

As expected, using only one step will produce an approximate shape with no discernible features or textures. However, the results improve quickly and are usually very satisfying in just 4-6 steps. Personally, I can tell that the 8-step image from the previous test is a bit too saturated and is “comic” to my taste. So in this example, we choose between five or six steps. The generation is very fast so you can create different variations using just 4 steps, and select and repeat the variation of your choice, using several more steps and sophisticated prompts if you want.

Guidance Scale and Negative Prompts

Note that in the previous example we used Guidance_scale of 1. This effectively disables. This works well at most prompts and is the fastest, but ignores negative prompts. You can also consider using negative prompts by providing guidance scales between 1 and 2. I found that large values don’t work.

Quality vs Base SDXL

From a quality perspective, how does this compare to the standard SDXL pipeline? Let’s take a look at an example!

You can quickly revert the pipeline to a standard SDXL pipeline by lowering the LORA weight and switching to the default scheduler.

from Diffuser Import eulerdiscretescheduler pipe.unload_lora_weights()pipe.scheduler = eulerdiscretescheduler.from_config(pipe.scheduler.config)

You can then perform the inference as normal in SDXL. Collect results using different steps.

Image = ()

for Steps in (1, 4, 8, 15, 20, twenty five, 30, 50): generator = torch.generator(device = pipe.device).manual_seed(1337)image = pipe(prompt = prompt, num_inference_steps = stept, generator = generator,).images(0) Image.Append (Image)

Use SDXL pipeline results (same prompt and random seed), 1, 4, 8, 15, 20, 25, 30, and 50 steps.

As you can see, the image in this example is of little use up to ~20 steps (second row), and the quality still increases significantly with more steps. The details of the final image are amazing, but it took 50 steps to get there.

LCM Rola with other models

This technique also works with other finely tuned SDXL or stable diffusion models. To demonstrate, let’s look at how to use DreamBooth to perform inferences about collage diffusion, a fine-tuned model from stable diffusion v1.5.

This code is similar to the code seen in the previous example. Load fine-tuned models and load LCM Rora suitable for stable diffusion v1.5.

from Diffuser Import Diffusionpipeline, lcmscheduler

Import Torch Model_id = “Wavymulder/Collage Diffusion”

lcm_lora_id = “Potential meaningless/LCM-LORA-SDV1-5”

pipe = diffusionpipeline.from_pretrained(model_id, variant =“FP16”)pipe.scheduler = lcmscheduler.from_config(pipe.scheduler.config)pipe.load_lora_weights(lcm_lora_id)pipe.to(device=“cuda”,dtype = torch.float16)prompt = “A collage style kid sits looking at the night sky full of stars.”

generator = torch.generator(device = pipe.device).manual_seed(1337) Image = pipe(prompt = prompt, generator = generator, neutral_prompt = negative_prompt, num_inference_steps =4,Guidance_scale =1,).images(0)image

LCM LORA technology with Dreambooth Stable Diffusion V1.5 model allows for four stages of inference.

Full diffuser integration

By integrating LCM with Diffusers, you can take advantage of many of the features and workflows that are part of the Diffusers toolbox. for example:

It supports Mac using Apple Silicon. Memory and performance optimizations such as Flash Anteress and Torch.comPile(). Additional memory storage strategies for low RAM environments including offloading of models. Workflows such as control nets and images. Training and fine tuning scripts.

benchmark

This section is not intended to be exhaustive, but shows the generation rates achieved on various computers. Let’s once again highlight how much freeing it is to explore image generation so easily.

Hardware SDXL LORA LCM (4 Steps) SDXL Standard (25 Steps) MAC, M1 MAX 6.5S 64S 2080 TI 4.7S 10.2S 3090 1.4S 7S 4090 0.7S 3.4S T4 (Google Colab Free Tier) 8.4S 26.5S A100 (80 GB) 1.2S 3.8S 3.8S 3.8S) 29S219S

These tests were run in all cases with one batch size using this script by Sayak Paul.

For cards with many capacity, such as the A100, generating multiple images at once will greatly improve performance. This is usually the case for production workloads.

LCM LORAS and models released today

Bonus: Combine LCM LORA with regular SDXL LORAS

Using Diffusers + PEFT integration, you can combine LCM Lora with regular SDXL LORA, providing a superpower for performing LCM inference in 4 steps.

Here we combine ciron2022/toy_face lora and lcm lora.

from Diffuser Import Diffusionpipeline, lcmscheduler

Import Torch Model_id = “stabilityai/stable-diffusion-xl-base-1.0”

lcm_lora_id = “Potential meaningless/LCM-LORA-SDXL”

pipe = diffusionpipeline.from_pretrained(model_id, variant =“FP16”)pipe.scheduler = lcmscheduler.from_config (pipe.scheduler.config)pipe.load_lora_weights (lcm_lora_id)pipe.load_lora_weights (“ciron2022/Toy Face”,weight_name =“toy_face_sdxl.safetensors”adapter_name =“toy”)pipe.set_adapters((()“Lora”, “toy”), adapter_weights = (1.0, 0.8)) pipe.to(device =“cuda”,dtype = torch.float16)prompt = “Toy_face Man”

negial_prompt = “Blurred, low quality, rendered, 3D, oversaturated”

Images = pipe(prompt = prompt, negial_prompt = negial_prompt, num_inference_steps =4,Guidance_scale =0.5,).images(0)image

Standard and LCM Rora were combined for high speed (4-step) inference.

Need some ideas to explore some Lora? Check out the experimental Lora The Explorer (LCM version) space to test out the amazing work from the community and get inspiration!

How to Train LCM Models and LORAs

As part of today’s Diffusers release, we provide training and tweak scripts developed in collaboration with the authors of the LCM team. The user allows:

Perform stable diffusion or full model distillation of SDXL models on large datasets such as LAION. Training LCM LORAS. This is a much easier process. As shown in this post, you can also perform high-speed inference with stable diffusion without distillation training.

Check Repo’s SDXL or stable diffusion instructions for more information.

We hope these scripts will encourage the community to try their own tweaks. Let us know if you want to use it for your project!

resource

credit

The surprising work of the potential consistency model was performed by the LCM team. Check the code, reports and paper. The project is a collaboration with the Diffusers team, the LCM team and community contributor Daniel Gu. We believe it is a testament to the possibility of open source AI, the cornerstone that enables researchers, practitioners and trollers to explore and collaborate on new ideas. We would also like to thank @MadeByollin for our continued contributions to the community, including the Float16 autoencoder used in our training scripts.