
Update (02/2024): Performance has been improved even more! Check out the updated benchmarks.

In a previous post on the Hugging Face blog, we introduced AWS Inferentia2, the second-generation AWS Inferentia accelerator, and explained how to use optimum-neuron to quickly deploy Hugging Face models for standard text and vision tasks on AWS Inferentia2 instances.

In a further step of integration with the AWS Neuron SDK, it is now possible to use 🤗 optimum-neuron to deploy LLM models for text generation on AWS Inferentia2.

And what better model could we choose for this demonstration than Llama 2, one of the most popular models on the Hugging Face Hub?

Set up 🤗 optimum-neuron on your Inferentia2 instance

Our recommendation is to use the Hugging Face Neuron Deep Learning AMI (DLAMI). The DLAMI comes pre-packaged with all the libraries you need, including Optimum Neuron, the Neuron drivers, Transformers, Datasets, Accelerate, and more.

Alternatively, you can use the Hugging Face Neuron SDK DLC to deploy on Amazon SageMaker.

Note: Stay tuned for an upcoming post dedicated to SageMaker deployment.

Finally, these components can also be installed manually on a fresh Inferentia2 instance by following the optimum-neuron installation instructions.

Export the Llama 2 model to Neuron

As explained in the optimum-neuron documentation, models need to be compiled and exported to a serialized format before they can run on Neuron devices.

Fortunately, optimum-neuron offers a very simple API to export standard Transformers models to the Neuron format.

    >>> from optimum.neuron import NeuronModelForCausalLM
    >>> compiler_args = {"num_cores": 24, "auto_cast_type": "fp16"}
    >>> input_shapes = {"batch_size": 1, "sequence_length": 2048}
    >>> model = NeuronModelForCausalLM.from_pretrained(
    ...     "meta-llama/Llama-2-7b-hf",
    ...     export=True,
    ...     **compiler_args,
    ...     **input_shapes,
    ... )

This deserves a bit of explanation:

- With compiler_args, we specify how many cores the model should be deployed on (each Neuron device has two cores) and with which precision (here float16).
- With input_shapes, we set the static input and output dimensions of the model. All model compilers require static shapes, and Neuron is no exception. Note that sequence_length constrains not only the length of the input context, but also the length of the KV cache, and therefore the output length.
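To make these two constraints concrete, here is a small sketch in plain Python. It does not call optimum-neuron, and the helper names `cores_for_devices` and `max_new_tokens_budget` are hypothetical, introduced only for illustration. The two-cores-per-device figure and the sequence length of 2048 come from the text above; treating the limit as "prompt plus generated tokens" is a simplification of the KV cache constraint.

```python
CORES_PER_NEURON_DEVICE = 2  # stated above: each Neuron device has two cores


def cores_for_devices(num_devices: int) -> int:
    """Number of Neuron cores exposed by `num_devices` Neuron devices."""
    if num_devices < 1:
        raise ValueError("num_devices must be at least 1")
    return num_devices * CORES_PER_NEURON_DEVICE


def max_new_tokens_budget(prompt_length: int, sequence_length: int) -> int:
    """Tokens that can still be generated for a prompt of `prompt_length` tokens.

    The compiled `sequence_length` bounds prompt and generated tokens together.
    """
    if prompt_length > sequence_length:
        raise ValueError("prompt does not fit in the compiled sequence length")
    return sequence_length - prompt_length


print(cores_for_devices(12))             # 24, the num_cores value used above
print(max_new_tokens_budget(256, 2048))  # 1792 tokens left for generation
```

In other words, a longer prompt leaves less room for generated tokens, and exceeding the compiled length requires a new export with a larger sequence_length.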

Depending on the parameters you choose and on the Inferentia host, this can take from a few minutes to more than an hour.

Luckily, you’ll need to do this only once, as you can save the model and reload it later.

    >>> model.save_pretrained("a_local_path_for_compiled_neuron_model")

Even better, you can push it to the Hugging Face Hub.

    >>> model.push_to_hub(
    ...     "a_local_path_for_compiled_neuron_model",
    ...     repository_id="aws-neuron/Llama-2-7b-hf-neuron-latency",
    ... )

Generate text using Llama 2 on AWS Inferentia2

Once the model has been exported, you can generate text using the Transformers library, as explained in detail in the previous post.

    >>> from optimum.neuron import NeuronModelForCausalLM
    >>> from transformers import AutoTokenizer
    >>> model = NeuronModelForCausalLM.from_pretrained("aws-neuron/Llama-2-7b-hf-neuron-latency")
    >>> tokenizer = AutoTokenizer.from_pretrained("aws-neuron/Llama-2-7b-hf-neuron-latency")
    >>> inputs = tokenizer("What is deep-learning?", return_tensors="pt")
    >>> # do_sample=True is required for top_p to take effect
    >>> outputs = model.generate(**inputs, do_sample=True, top_p=0.9)
    >>> tokenizer.batch_decode(outputs, skip_special_tokens=True)
    ['What is deep-learning? ...']

Note: When you pass multiple input prompts to the model, the resulting token sequences must be padded to the left with the end-of-stream token. The tokenizers saved with the exported models are configured accordingly.
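As an illustration of what left padding means for a batch of prompts, here is a minimal sketch in plain Python. The `left_pad` helper and the token ids are hypothetical and stand in for what a tokenizer configured for left padding produces; this is not the tokenizer's actual implementation.

```python
from typing import List, Tuple


def left_pad(
    sequences: List[List[int]], pad_token_id: int
) -> Tuple[List[List[int]], List[List[int]]]:
    """Left-pad token id sequences to a common length.

    Returns the padded ids and an attention mask (1 = real token, 0 = padding).
    """
    max_len = max(len(seq) for seq in sequences)
    padded, mask = [], []
    for seq in sequences:
        n_pad = max_len - len(seq)
        padded.append([pad_token_id] * n_pad + list(seq))
        mask.append([0] * n_pad + [1] * len(seq))
    return padded, mask


# Hypothetical token ids; 2 stands in for the end-of-stream token id.
EOS_ID = 2
ids, attention_mask = left_pad([[5, 6, 7], [8, 9]], pad_token_id=EOS_ID)
print(ids)             # [[5, 6, 7], [2, 8, 9]]
print(attention_mask)  # [[1, 1, 1], [0, 1, 1]]
```

Padding on the left keeps the last real token of every prompt in the final position, which is where generation continues from. With a Transformers tokenizer configured this way, passing a list of prompts with `padding=True` should yield the same layout.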

The following generation strategies are supported:

- greedy search,
- multinomial sampling with top-k and top-p (with temperature).

Most logits pre-processing/filters (such as repetition penalty) are supported.
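For readers unfamiliar with these strategies, the following is a minimal, self-contained sketch of greedy search and of multinomial sampling with temperature, top-k and top-p over a single logits vector. It is an illustration written in plain Python, not the code used by transformers or optimum-neuron, and all function names are hypothetical.

```python
import math
import random
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    """Turn logits into probabilities; temperature < 1 sharpens the distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_top_p_filter(probs: List[float], top_k: int, top_p: float) -> List[float]:
    """Keep the top_k most likely tokens, then the smallest set of those whose
    renormalised cumulative probability reaches top_p. Others get probability 0."""
    if top_k < 1:
        raise ValueError("top_k must be at least 1")
    ranked = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    k_mass = sum(probs[i] for i in ranked)
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += probs[i] / k_mass
        if cumulative >= top_p:
            break
    kept_mass = sum(probs[i] for i in kept)
    filtered = [0.0] * len(probs)
    for i in kept:
        filtered[i] = probs[i] / kept_mass
    return filtered


def sample_next_token(logits, temperature=0.9, top_k=50, top_p=0.9, rng=random):
    """Multinomial sampling over the filtered distribution."""
    probs = top_k_top_p_filter(softmax(logits, temperature), top_k, top_p)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


def greedy_next_token(logits: List[float]) -> int:
    """Greedy search: always pick the most likely token."""
    return max(range(len(logits)), key=lambda i: logits[i])


example_logits = [2.0, 1.0, 0.0, -1.0]
print(greedy_next_token(example_logits))  # 0
print(sample_next_token(example_logits, rng=random.Random(0)))
```

Greedy search is deterministic, while sampling trades determinism for diversity; top-k and top-p simply restrict which tokens remain eligible before the draw.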

All-in-one with optimum-neuron pipelines

For those who like to keep it simple, there is an even simpler way to use an LLM on AWS Inferentia2: optimum-neuron pipelines.

Using them is as simple as:

    >>> from optimum.neuron import pipeline
    >>> p = pipeline("text-generation", "aws-neuron/Llama-2-7b-hf-neuron-budget")
    >>> p("My favorite place on earth is", max_new_tokens=64, do_sample=True, top_k=50)
    [{'generated_text': 'My favorite place on earth is ...'}]

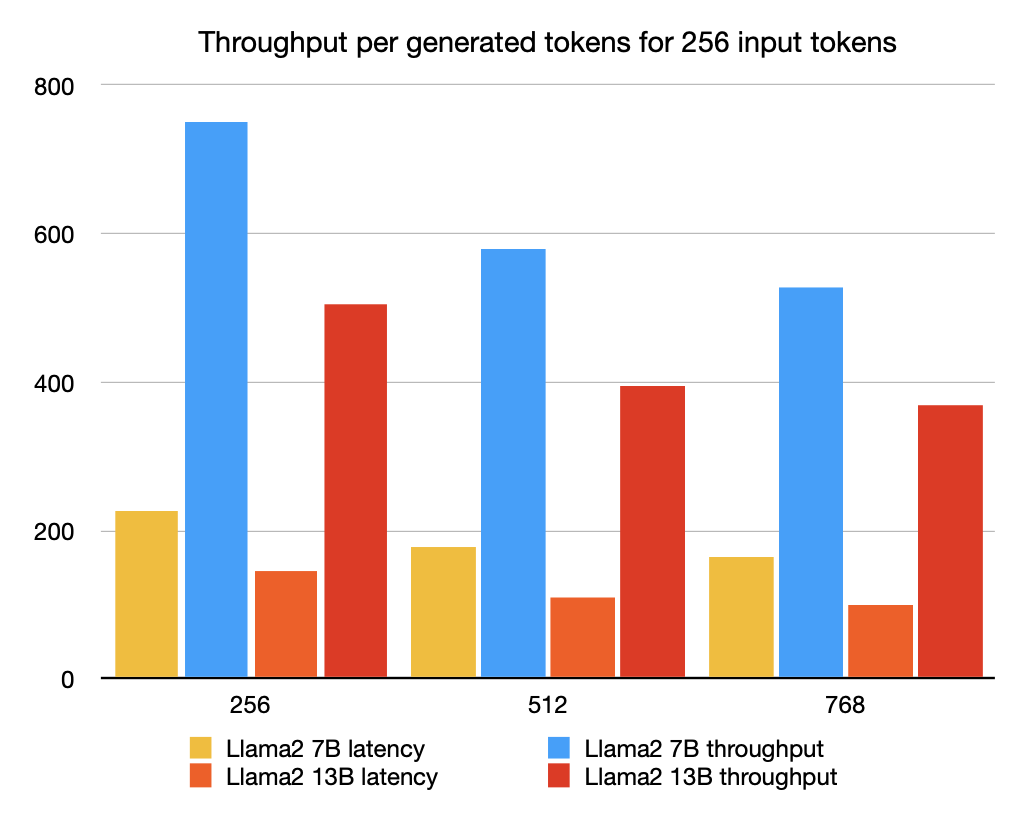

Benchmarks

But how efficient is text generation on Inferentia2? Let's find out!

We have uploaded pre-compiled versions of the Llama 2 7B and 13B models to the hub, with different configurations.

Note: All models are compiled with a maximum sequence length of 2048.

The Llama2 7B “budget” model is meant to be deployed on an inf2.xlarge instance, which has only one Neuron device and enough CPU memory to load the model.

All other models are compiled to use the full range of cores available on the inf2.48xlarge instance.

Note: For more information about the available instances, see the Inferentia2 product page.

We created two “latency”-oriented configurations for the Llama2 7B and Llama2 13B models, which serve only one request at a time, but at full speed.

We also created two “throughput”-oriented configurations, which serve up to four requests in parallel.

To evaluate the model, we generate tokens up to a total sequence length of 1024 starting with 256 input tokens (i.e., we generate 256, 512, and 768 tokens).

Note: The “budget” model numbers are reported, but are not included in the graphs for better readability.

Encoding time

The encoding time is the time required to process the input tokens and generate the first output token. It is a very important metric, as it corresponds to the latency directly perceived by the user when streaming generated tokens.

We test the encoding time for increasing context sizes: 256 input tokens correspond roughly to typical Q/A usage, while 768 is more typical of a retrieval-augmented generation (RAG) use case.

The “budget” model (Llama2 7B-B) is deployed on an inf2.xlarge instance, while the other models are deployed on an inf2.48xlarge instance.

The encoding time is expressed in seconds.

| Input tokens | Llama2 7B-L | Llama2 7B-T | Llama2 13B-L | Llama2 13B-T | Llama2 7B-B |
|---|---|---|---|---|---|
| 256 | 0.5 | 0.9 | 0.6 | 1.8 | 0.3 |
| 512 | 0.7 | 1.6 | 1.1 | 3.0 | 0.4 |
| 768 | 1.1 | 3.3 | 1.7 | 5.2 | 0.5 |

It can be seen that all models deployed exhibit excellent response times, even in long contexts.

End-to-end latency

The end-to-end latency corresponds to the total time to reach a sequence length of 1024 tokens.

Therefore, encoding and generation times are included.
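To make the two metrics concrete, here is a sketch of how encoding time and end-to-end latency could be measured on any token stream. It is not the benchmark code behind the numbers in this post: `measure_stream` is a hypothetical helper, and `fake_token_stream` is a stand-in for a real streaming generator so that the example runs without an Inferentia2 instance.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_stream(stream: Iterable[int]) -> Tuple[float, float, int]:
    """Return (encoding_time, end_to_end_latency, num_tokens), times in seconds.

    encoding_time is the delay before the first token arrives;
    end_to_end_latency is the delay before the last one does.
    """
    start = time.perf_counter()
    first = None
    count = 0
    for _ in stream:
        count += 1
        if first is None:
            first = time.perf_counter() - start
    total = time.perf_counter() - start
    if first is None:
        raise ValueError("the stream produced no tokens")
    return first, total, count


def fake_token_stream(n_tokens: int, encode_s: float, step_s: float) -> Iterator[int]:
    """Stand-in generator: a slower first token, then steady decoding steps."""
    time.sleep(encode_s)  # simulates processing the input context
    yield 0
    for i in range(1, n_tokens):
        time.sleep(step_s)  # simulates generating one more token
        yield i


encoding_time, latency, n = measure_stream(fake_token_stream(8, 0.02, 0.005))
print(f"first token after {encoding_time:.3f}s, {n} tokens in {latency:.3f}s")
```

The end-to-end latency always contains the encoding time, which is why the former grows with the number of new tokens while the latter only depends on the input length.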

The “budget” model (Llama2 7B-B) is deployed on an inf2.xlarge instance, while the other models are deployed on an inf2.48xlarge instance.

Latency is expressed in seconds.

| New tokens | Llama2 7B-L | Llama2 7B-T | Llama2 13B-L | Llama2 13B-T | Llama2 7B-B |
|---|---|---|---|---|---|
| 256 | 2.3 | 2.7 | 3.5 | 4.1 | 15.9 |
| 512 | 4.4 | 5.3 | 6.9 | 7.8 | 31.7 |
| 768 | 6.2 | 7.7 | 10.2 | 11.1 | 47.3 |

All models deployed on high-end instances show good latency, even those actually configured to optimize throughput.

The latency of the deployed “budget” model is significantly higher, but still acceptable.

Throughput

We adopt the same convention as other benchmarks to evaluate throughput: we divide the sum of the input and output tokens by the end-to-end latency. In other words, we divide batch_size * sequence_length by the end-to-end latency to obtain the number of tokens processed per second.

The “budget” model (Llama2 7B-B) is deployed on an inf2.xlarge instance, while the other models are deployed on an inf2.48xlarge instance.

Throughput is expressed in tokens per second.

| New tokens | Llama2 7B-L | Llama2 7B-T | Llama2 13B-L | Llama2 13B-T | Llama2 7B-B |
|---|---|---|---|---|---|
| 256 | 227 | 750 | 145 | 504 | 32 |
| 512 | 177 | 579 | 111 | 394 | 24 |
| 768 | 164 | 529 | 101 | 370 | 22 |
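As a sanity check, the throughput convention can be applied to the end-to-end latencies reported earlier. Read as tokens per second, it amounts to batch_size * sequence_length divided by the latency, where the sequence length is the 256 input tokens plus the new tokens. The sketch below assumes a batch size of 1 for the latency models and 4 for the throughput models, as described in the configurations above; the batch size of 1 for the budget model is an assumption inferred from the numbers. Small differences are expected because the published latencies are rounded.

```python
def throughput(batch_size: int, sequence_length: int, latency_s: float) -> float:
    """Tokens per second, counting input and output tokens of every request."""
    return batch_size * sequence_length / latency_s


INPUT_TOKENS = 256

# model: (batch_size, {new_tokens: end-to-end latency in seconds})
latencies = {
    "Llama2 7B-L": (1, {256: 2.3, 512: 4.4, 768: 6.2}),
    "Llama2 7B-T": (4, {256: 2.7, 512: 5.3, 768: 7.7}),
    "Llama2 7B-B": (1, {256: 15.9, 512: 31.7, 768: 47.3}),  # batch size assumed
}

# model: {new_tokens: reported throughput in tokens per second}
reported = {
    "Llama2 7B-L": {256: 227, 512: 177, 768: 164},
    "Llama2 7B-T": {256: 750, 512: 579, 768: 529},
    "Llama2 7B-B": {256: 32, 512: 24, 768: 22},
}

for model, (batch_size, by_new_tokens) in latencies.items():
    for new_tokens, latency_s in by_new_tokens.items():
        computed = throughput(batch_size, INPUT_TOKENS + new_tokens, latency_s)
        print(f"{model}, {new_tokens} new tokens: "
              f"computed {computed:.0f}, reported {reported[model][new_tokens]}")
```

Note that this convention counts the input tokens as well, so it is higher than the rate at which new tokens are actually produced.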

Again, models deployed on high-end instances have very good throughput, even those optimized for latency.

The “budget” model has a much lower throughput, but it is still fine for streaming use cases, given that the average reader reads around 5 words per second.
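The comparison with reading speed can be made a little more explicit. The sketch below estimates how fast the budget model produces new tokens by removing the encoding time (0.3 s for 256 input tokens) from its end-to-end latencies, then converts tokens to words. The ratio of 0.75 words per token is an assumed rule of thumb for English text, not a figure from this post, and the result is only approximate.

```python
WORDS_PER_TOKEN = 0.75    # assumption: rough rule of thumb for English text
READER_WORDS_PER_S = 5.0  # reading speed quoted above

ENCODING_TIME_S = 0.3                          # Llama2 7B-B, 256 input tokens
latency_s = {256: 15.9, 512: 31.7, 768: 47.3}  # Llama2 7B-B, end-to-end


def decode_rate(new_tokens: int, latency: float, encoding_time: float) -> float:
    """Approximate new tokens per second once the input has been encoded."""
    return new_tokens / (latency - encoding_time)


for new_tokens, latency in latency_s.items():
    tokens_per_s = decode_rate(new_tokens, latency, ENCODING_TIME_S)
    words_per_s = tokens_per_s * WORDS_PER_TOKEN
    print(f"{new_tokens} new tokens: {tokens_per_s:.1f} tokens/s, "
          f"about {words_per_s:.1f} words/s")
```

Under these assumptions the budget model generates roughly 16 tokens per second, which stays comfortably above the 5 words per second a reader can absorb.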

Conclusion

We have shown how easy it is to deploy Llama 2 models from the Hugging Face Hub on AWS Inferentia2 using 🤗 optimum-neuron.

The deployed model shows excellent performance in terms of encoding time, latency and throughput.

Interestingly, the latency of the deployed models is not very sensitive to the batch size, which opens the way for their deployment on inference endpoints that serve multiple requests in parallel.

However, there is still plenty of room for improvement:

- In the current implementation, the only way to increase throughput is to increase the batch size, but this is currently limited by device memory. Alternative options such as pipelining are currently being integrated.
- The static sequence length limits the model's ability to encode long contexts. It will be interesting to see whether attention sinks are a valid option to address this.