Computer scientists have advanced text-to-video technology on one of nature’s most challenging subjects: systems that change over time. Watching a flower bloom or bread rise may seem simple, but generating realistic videos of such events has been a stubborn obstacle for artificial intelligence. That is now changing thanks to a new model called Magictime.

New path for video generation

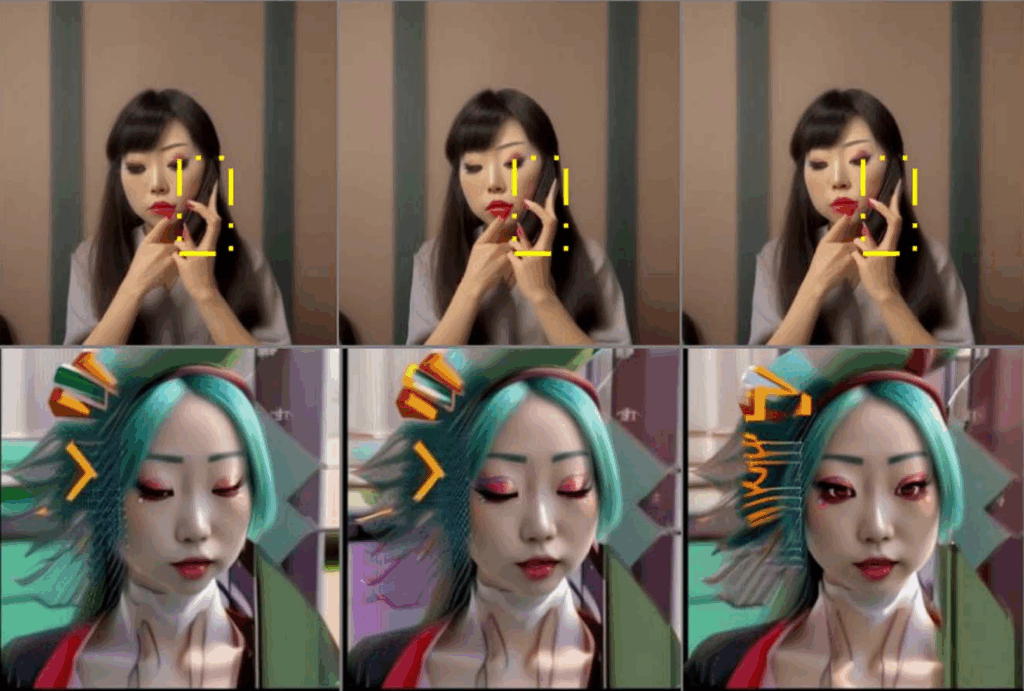

Text-to-video systems are advancing rapidly, but they still struggle to capture real-world physics. When asked to generate transformations, they fail to show convincing motion or variety. Instead, they produce stiff-looking videos that lack the natural flow you would expect from time-lapse footage.

“Artificial intelligence was developed to understand the real world and simulate the activities and events that occur,” says Jinfa Huang, a doctoral student at the University of Rochester, advised by Professor Jiebo Luo. “Magictime is a step towards AI that can better simulate the physical, chemical, biological, or social properties of the world around us.”

Learning from time-lapse videos

To teach the system how the real world unfolds, researchers built a data set called Chronomagic. It includes more than 2,000 time-lapse clips paired with detailed captions. These videos capture growth, collapse, and construction in motion, and provide examples of how things actually change over time.

Magictime uses a layered design to process this information. First, a two-stage adaptation scheme lets the system encode patterns of change and adapt a pre-trained text-to-video model. Second, a dynamic frame extraction strategy lets the model focus on the moments of greatest variation, which is essential for learning processes that are slow but dramatic.
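The article does not describe how the dynamic frame extraction works internally. As a rough illustration of the general idea only, the sketch below picks the frames of a clip that follow the largest frame-to-frame change, so that a slow process with a few dramatic moments is not sampled mostly during its quiet stretches. The function name `select_dynamic_frames`, the mean-absolute-difference score, and the choice to always keep the first frame are assumptions made for this example, not Magictime's actual method.

```python
import numpy as np


def select_dynamic_frames(video: np.ndarray, num_frames: int) -> list[int]:
    """Return sorted indices of the frames that follow the largest changes.

    Hypothetical helper for illustration; not taken from Magictime.
    video: array of shape (T, H, W) or (T, H, W, C).
    """
    total = len(video)
    if num_frames < 1 or num_frames > total:
        raise ValueError("num_frames must be between 1 and the clip length")

    # Flatten each frame so the change score is a single number per frame.
    frames = video.astype(np.float64).reshape(total, -1)

    # Assumed score: mean absolute difference from the previous frame.
    # change[i] measures the jump from frame i to frame i + 1.
    change = np.abs(np.diff(frames, axis=0)).mean(axis=1)

    # Keep frame 0 as a reference, then add the frames with the biggest jumps.
    top = np.argsort(change)[::-1][: num_frames - 1] + 1
    return sorted([0, *top.tolist()])
```

Called on a clip whose content only changes at two moments, the function would return the first frame plus the two frames right after those changes, instead of evenly spaced frames that might miss them.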

A special text encoder adds even more precision. By better interpreting written prompts, the system can link descriptive words to the appropriate type of visual transformation. Together, these components allow Magictime to generate more convincing sequences.

Early abilities and potential uses

The current open-source version of the system produces short clips of just 2 seconds at 512 by 512 pixels and eight frames per second. An upgraded architecture extends this to 10 seconds. The clips are short, but they can capture events such as trees sprouting, flowers unfolding, or a loaf of bread swelling in the oven.

The results are impressive compared with previous models. Where earlier systems yield stiff clips with little motion, Magictime produces richer transformations that are closer to what is expected in real life.

For now, the technology is both playful and practical. In the public demonstration, anyone can enter a prompt and watch the system bring it to life. However, researchers view it as more than a novelty. They see it as an early step toward scientific tools that can make research faster.

“We hope that one day, for example, biologists can use generated videos to speed up preliminary investigations of ideas,” explains Huang. “While physical experiments remain essential for final verification, accurate simulations can shorten the iteration cycle and reduce the number of live trials needed.”

Beyond biology

The model shines on biological processes such as growth and metamorphosis, but its uses could extend further. Construction is one clear example: buildings rising from their foundations or bridges being assembled could be simulated in stages. Food science also offers rich ground, with processes such as rising dough, aging cheese, and setting chocolate.

The fundamental idea is that if AI can understand how matter changes, it can represent more of the physical world. This opens a path to models that not only mimic appearance but also capture dynamics. By simulating real transformations, researchers could predict results, explore possibilities, and communicate complex ideas through visual media.

Scientific promise

The videos are still short and lack the full realism of actual footage, but their promise lies in what they signal for the future. As computing power and datasets grow, systems like Magictime could evolve into powerful simulators. Imagine scientists testing how coral reefs grow under different climate scenarios, or architects previewing how buildings weather over decades.

The field of text-to-video is moving forward, and adding real physics to these systems could be the next milestone.

The success of Magictime shows that by grounding AI in natural processes, it is possible to move beyond static images and begin to capture the rhythm of change itself.
